After a decade of producing electronic music, I had learned that a great mix is about creating a sense of space. When I moved into object-based audio (such as Dolby Atmos), I ran into a paradox: I could place a vocal precisely in 3D space using exact coordinates, yet the next step, getting the reverb right, felt like stepping back into the Stone Age.

The Bottleneck of Spatial Incoherence

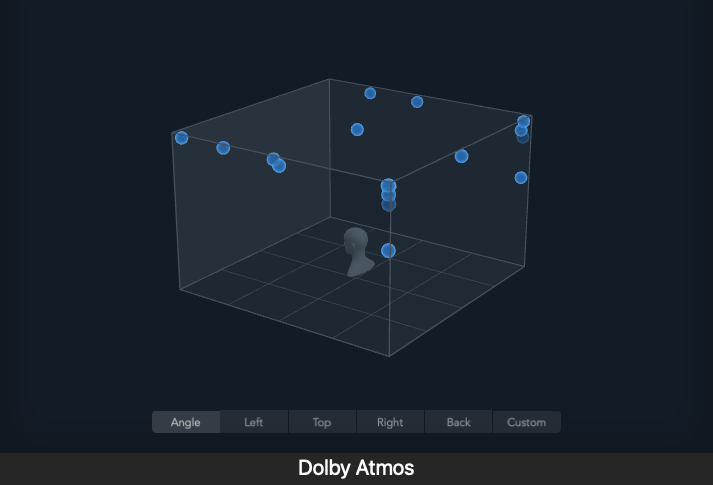

In spatial mixing, we use digital metadata (x, y, z) to define an object's location. However, to make that object sound physically plausible (far away, close, or high up), the Reverb Send Level must be adjusted by hand to match that coordinate.

I discovered that this manual adjustment was the core problem:

- Inconsistency: What sounds right in Scene A might be wrong in Scene B. Maintaining spatial realism across a two-hour film or an album tracklist became a massive, repetitive task.

- Subjectivity: The decision relied entirely on my ears, not on physics. I was spending creative hours tweaking parameters that could be calculated rather than guessed.

The Revelation: If the “correct” reverb level is a function of the sound’s position and the room’s acoustics, it is an objective, solvable problem.

The Solution Concept: We could use AI (deep learning) to perform this complex, repetitive calculation of the direct-to-reverberant ratio (DRR) instantly. My thesis idea was born: train an AI to map a vocal's characteristics and its 3D position directly to the DRR-derived Reverb Send Level.
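The "objective" part of this idea can be illustrated with a classic room-acoustics baseline. The sketch below is a hypothetical label generator, not the author's actual pipeline. It assumes a diffuse (Sabine) reverberant field, object coordinates in metres relative to the listener, and a simple mapping where every dB of lost DRR adds one dB of reverb send. `Room`, `critical_distance`, `drr_db`, and `reverb_send_db` are illustrative names and not part of any Atmos tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Room:
    """Hypothetical room description used only for this sketch."""
    volume_m3: float          # room volume V in cubic metres
    rt60_s: float             # reverberation time T60 in seconds
    directivity: float = 1.0  # source directivity factor Q (1 = omnidirectional)


def critical_distance(room: Room) -> float:
    """Distance (m) at which direct and reverberant energy are equal.

    Classic Sabine diffuse-field estimate: r_c ~= 0.057 * sqrt(Q * V / T60).
    """
    return 0.057 * math.sqrt(room.directivity * room.volume_m3 / room.rt60_s)


def drr_db(position, listener, room: Room) -> float:
    """Direct-to-reverberant ratio (dB) for a source at `position`.

    Direct energy falls as 1/r^2 while the diffuse reverberant field is
    assumed constant everywhere, so DRR = 20 * log10(r_c / r).
    """
    r = math.dist(position, listener)
    r = max(r, 0.1)  # clamp so a source on top of the listener avoids log(0)
    return 20.0 * math.log10(critical_distance(room) / r)


def reverb_send_db(position, listener, room: Room, ref_drr_db: float = 0.0) -> float:
    """Hypothetical DRR-to-send mapping, as a relative level in dB.

    Assumption: a source whose DRR equals `ref_drr_db` gets a 0 dB send,
    and each dB of DRR below that reference adds one dB of send.
    """
    return ref_drr_db - drr_db(position, listener, room)


if __name__ == "__main__":
    room = Room(volume_m3=200.0, rt60_s=0.8)  # made-up example room
    listener = (0.0, 0.0, 0.0)
    for pos in [(0.0, 0.5, 0.0), (0.0, 1.0, 0.0), (0.0, 2.0, 0.0), (1.0, 2.0, 1.5)]:
        print(pos, f"send {reverb_send_db(pos, listener, room):+.1f} dB")
```

Under these assumptions, doubling the vocal's distance raises the suggested send by about 6 dB, because the direct sound drops by 20·log10(2) ≈ 6 dB while the diffuse field stays constant. A formula like this ignores the vocal's own characteristics, which is the part a trained model would be expected to add.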

This project is the culmination of that journey: turning creative frustration into a rigorous technical solution that aims to bring speed, consistency, and scientific grounding to the art of spatial vocal mixing.