In the second part of my blog series on the IRCAM Forum, I summarize two workshops and performances whose approaches resemble my own research project and could inform it.

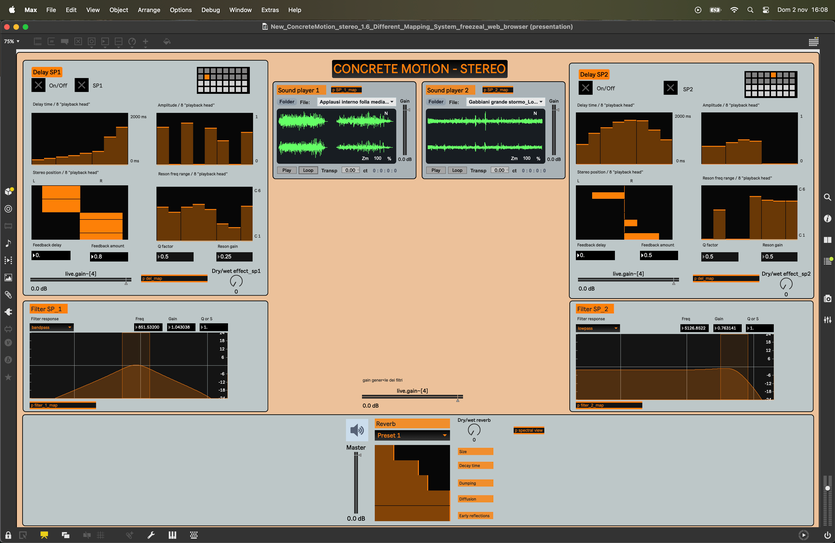

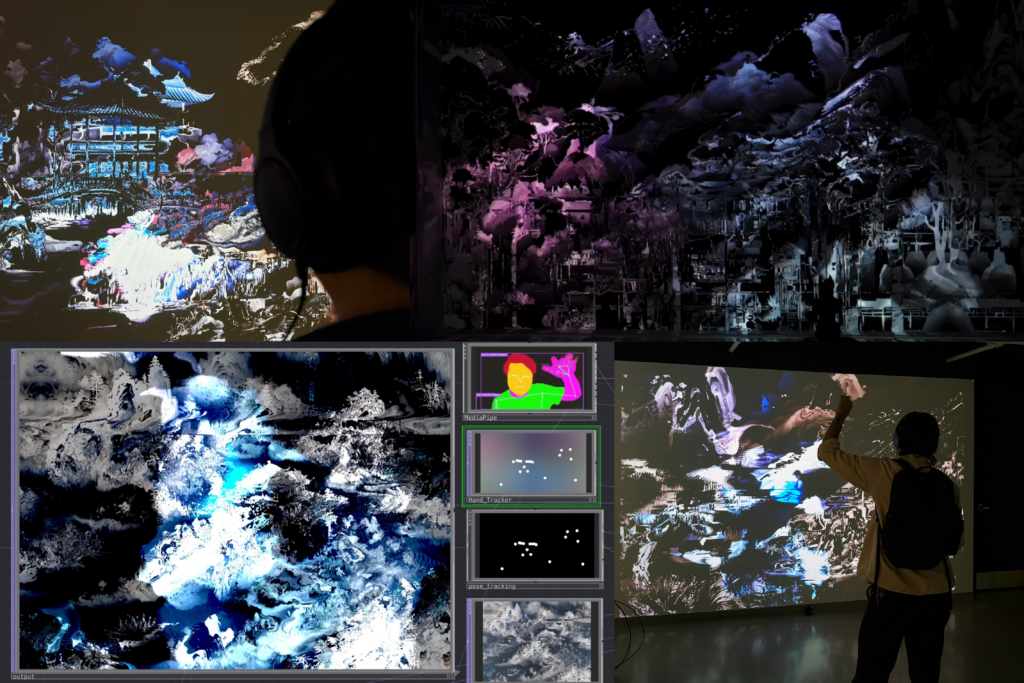

Concrete Motion is an experimental tool designed for educational settings that supports the study and creation of sound-based music through physical movement. The system combines the flexible audio processing of Max/MSP with Google MediaPipe body tracking inside the TouchDesigner environment. Together, these technologies create an interactive digital space in which listening and electroacoustic analysis are mediated through the user's bodily gestures. The aim is to bridge the gap between abstract musical concepts and tangible, physical interaction for learners and creators alike.

While this project's research objectives are educational, its use of gestural interaction to create an accessible environment translates directly to my own idea of an accessible interface. The main technical difference is that the MediaPipe Hand Landmarker runs directly inside TouchDesigner. I am not sure whether this adds latency, but it could also be an option for my project, especially if I plan to add visualisation of the gestures and the processed audio.

Liminal is an interactive installation that moves beyond traditional control-based models to explore a "liminal" space where agency is shared between humans and AI. In this environment, human gestures do not function as direct commands; instead, they serve as contextual information that influences the system's evolving behaviour over time. The architecture uses real-time computer vision and a Python-based decision layer, so that audiovisual changes emerge through gradual modulation and probabilistic weighting rather than immediate cause and effect. By distributing authorship between the participant and the machine, the work turns interaction into a sustained, meditative dialogue shaped by accumulated history and continuous negotiation.

I attended both the performance and the workshop, since the project shares my philosophy of moving from gestural interaction to audio creation. Compared with other projects on this theme, what stood out to me was the bidirectional interaction and shared decision-making between the human input and the model's system. Perhaps this can also be read as an analogy for how many everyday activities will work in the future, if they do not already. On a technical level, the computer vision was again implemented in TouchDesigner, as in Concrete Motion.
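To make the idea of gestures as context rather than commands a bit more concrete, here is a minimal Python sketch of how such a decision layer might work. It is not Liminal's implementation, which I have not seen. The class name, the single "gesture energy" input in [0, 1], the list of states and all constants are my own assumptions. Gestures feed an exponential moving average (the accumulated history), that context biases a weighted random choice between states, and a continuous output parameter glides toward the context instead of jumping to it.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ContextualDecisionLayer:
    """Hypothetical sketch: gestures feed an accumulated context
    instead of triggering audiovisual changes directly."""

    states: list[str]      # ordered from calm to active (assumed)
    memory: float = 0.95   # share of past context kept per tick (assumed value)
    glide: float = 0.05    # share of the remaining distance covered per tick (assumed value)
    context: float = 0.0   # accumulated gesture history in [0, 1]
    param: float = 0.0     # one continuous output, e.g. a filter amount
    rng: random.Random = field(default_factory=lambda: random.Random(0))

    def update_context(self, gesture_energy: float) -> None:
        # Clamp the input, then blend it into the history with an
        # exponential moving average, so one gesture only nudges the context.
        energy = min(max(gesture_energy, 0.0), 1.0)
        self.context = self.memory * self.context + (1 - self.memory) * energy

    def choose_state(self) -> str:
        # Probabilistic weighting: a low context favours calm states, a high
        # context favours active ones, but every state keeps a nonzero chance.
        n = len(self.states)
        weights = [
            (1 - self.context) * (n - 1 - i) + self.context * i + 0.1
            for i in range(n)
        ]
        return self.rng.choices(self.states, weights=weights, k=1)[0]

    def step(self, gesture_energy: float) -> tuple[str, float]:
        self.update_context(gesture_energy)
        state = self.choose_state()
        # Gradual modulation: the output glides toward the context
        # rather than jumping to it.
        self.param += self.glide * (self.context - self.param)
        return state, self.param


if __name__ == "__main__":
    layer = ContextualDecisionLayer(states=["drone", "texture", "burst"])
    for tick in range(5):
        state, value = layer.step(1.0)  # a sustained strong gesture
        print(tick, state, round(value, 4))
```

In this sketch a single strong gesture barely moves the output. Only sustained activity shifts both the parameter and the odds of the more active states, which is roughly the gradual, negotiated behaviour described for Liminal. In a setup like mine, the gesture energy could come from hand-tracking data, but that mapping is also an assumption.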

Both projects incorporate most of the technical elements I aim to achieve in my own project, which is why it was interesting to hear about their approaches and discuss the ideas behind them. Although my product will head toward a different end goal, they are still good reference points for comparing technical feasibility and workflow. All in all, our days at the IRCAM Forum brought me exciting insights and takeaways from diverse fields of audio-focussed research.