Last week we attended the IRCAM Forum in Paris as part of our semester excursion. Overall it was an interesting couple of days, in which we had the chance to experience a lot of the latest research and techniques in various fields of audio and sound. In this post I will try to summarise my main takeaways from a selection of the talks, workshops and performances I attended.

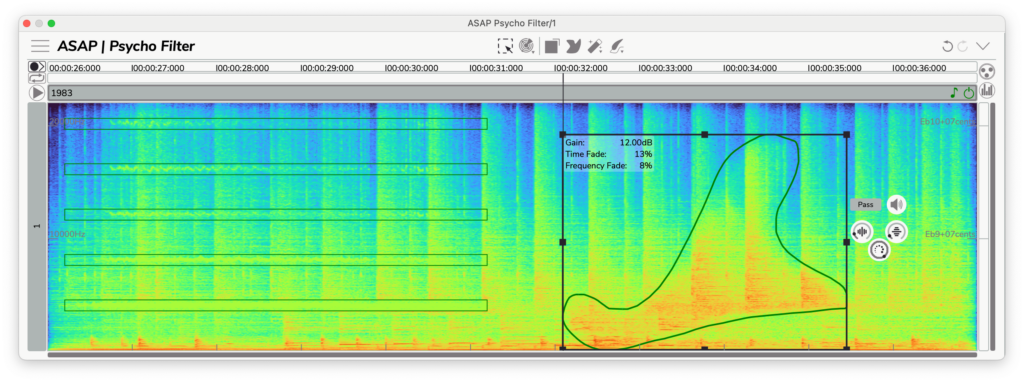

I attended a talk and workshop by Pierre Guillot, who presented updates and news on the tools Partiels and ASAP. Partiels is an application dedicated to analysing audio signals and retrieving useful data for signal processing and sound design. One of the interesting new developments is a Python integration that gives direct access to the analysed data. The ASAP tools are more of a direct creative solution for manipulating audio in various applications. The three major tools mentioned were Psycho Filter, which applies spectral filters directly to a source; Pitches Brew, an advanced pitch and formant manipulation tool based on interactive frequency curve editing; and Stretch Life, a time manipulation tool for compressing and stretching sound dynamically. Other notable mentions were the Spectral Morphing and Spectral Crossing tools, which allow two audio sources to be combined and 'morphed' in the spectral domain. Altogether these seem to be interesting tools, cleverly designed and, I would imagine, quite accessible for most users.

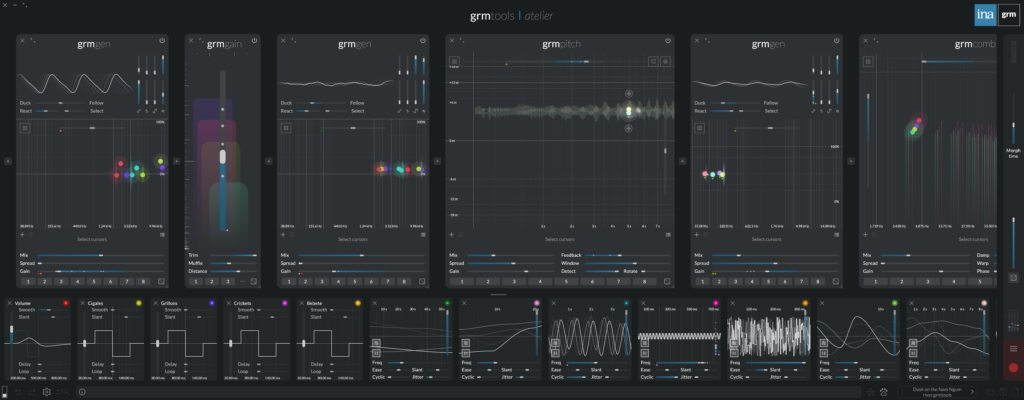

Another interesting workshop was the presentation of the GRM Tools Atelier software. It is a sound processing and synthesis environment for real-time, multichannel production, both live and in the studio. I really liked the modular approach and the quick, intuitive randomisation capabilities, which allow multiple parameters to be agitated at once. This can be an interesting choice for sound artists who want to work with a single standalone application and with more graphically intuitive controls than, for example, Pure Data or Max/MSP. As I already own synthesizer software with a similar modular design, albeit without the multichannel capabilities, I will stick with it for now.
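
To make the idea of agitating several parameters at once a bit more concrete, here is a minimal Python sketch of how such a randomisation could work in principle. This is my own illustration and not how GRM Tools Atelier is implemented: the parameter names, their ranges, and the `agitate` function are all hypothetical.

```python
import random

# Hypothetical parameter set: names and ranges are illustrative only,
# they are not taken from GRM Tools Atelier.
PARAM_RANGES = {
    "cutoff_hz": (20.0, 20000.0),
    "resonance": (0.0, 1.0),
    "grain_ms": (5.0, 500.0),
}


def agitate(params, ranges, amount, rng=random):
    """Return a copy of params with every value nudged randomly.

    amount is in [0, 1]: 0 leaves the values untouched, while 1 lets
    each value land anywhere inside its parameter range.
    """
    result = {}
    for name, value in params.items():
        low, high = ranges[name]
        # Maximum offset, scaled by the width of this parameter's range.
        span = (high - low) * amount
        candidate = value + rng.uniform(-span, span)
        # Clamp so the result never leaves the allowed range.
        result[name] = min(high, max(low, candidate))
    return result


current = {"cutoff_hz": 1000.0, "resonance": 0.3, "grain_ms": 50.0}
rng = random.Random(42)  # seeded so the variation is repeatable
agitated = agitate(current, PARAM_RANGES, amount=0.2, rng=rng)
```

Scaling the offset by each parameter's range means a single `amount` control moves a filter cutoff and a resonance value by a comparable proportion, which is roughly the feel of one randomisation control acting on a whole patch.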

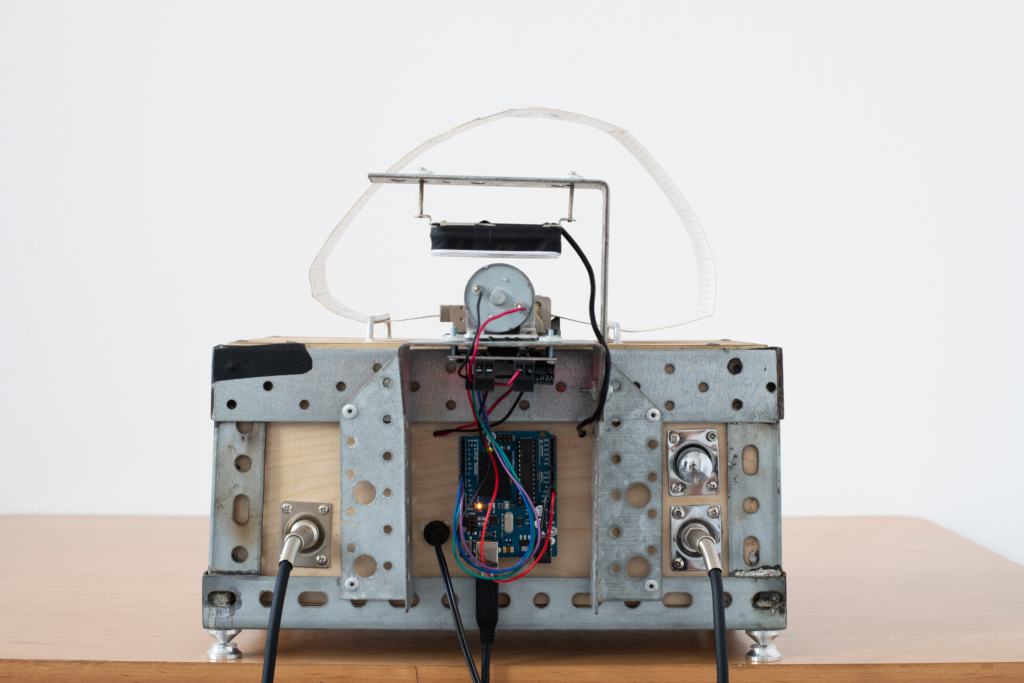

I also experienced a performance of VASE by composer Yuval Seeberger. The device is a motorized music-box installation that performs a 12-minute, semi-algorithmic composition by combining a physical punched-paper score with digital processing. The system uses a specialized "ensemble" consisting of an acoustic-mechanical music box, an analog motor, Max/MSP synthesis, and RAVE neural audio models to create a rich, layered sonic environment. While the structure follows a formal organization, the irregular communication between the computer and the mechanical hardware ensures that each loop cycle contains subtle, unpredictable variations. Through the use of piezo and magnetic rail-coil pickups, the piece bridges the gap between tactile mechanical movement and real-time AI-driven sound generation. I was quite fascinated by the various soundscapes it was able to produce, and by how the composer's random interaction with it influenced the installation.