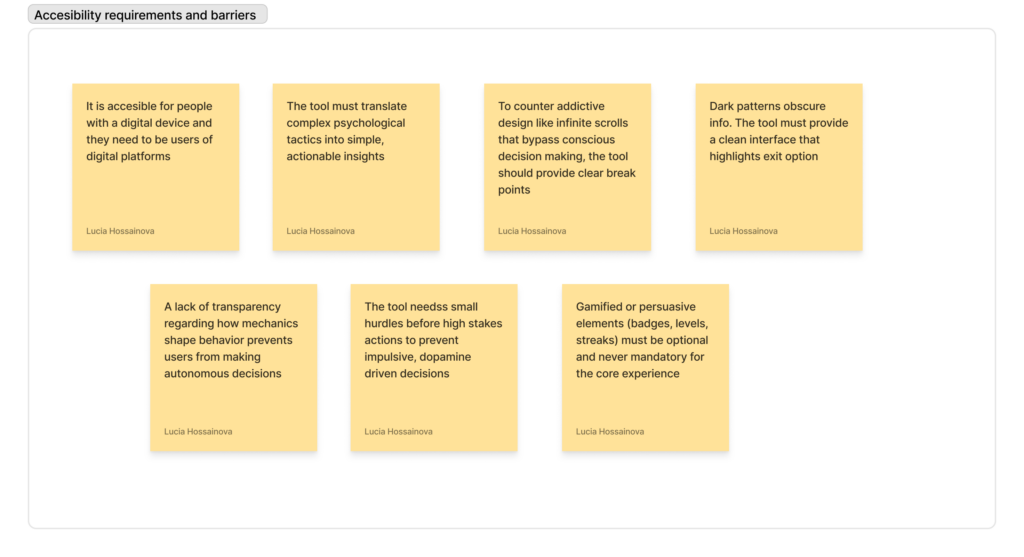

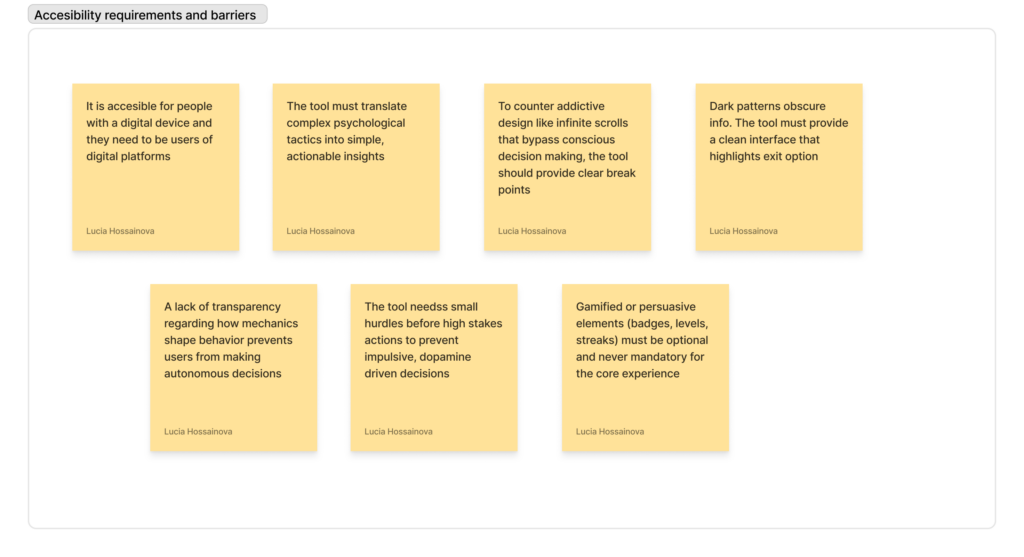

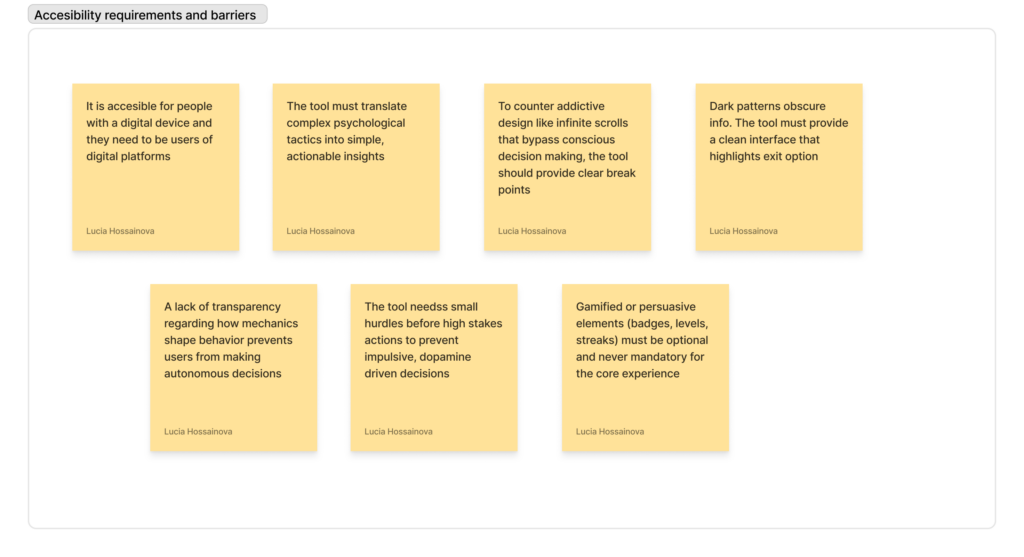

Accessibility requirements and barriers

MetaBow: Gesture Mapping in Immersive Sonic Environments

The MetaBow project investigates how an augmented violin bow equipped with inertial measurement units (IMUs) can link traditional performance technique with digital sound processing in immersive speaker setups. The authors address the challenge of mapping complex motion data to audio without overwhelming the musician by adopting a hybrid strategy: direct mappings provide predictability, while machine learning handles more nuanced spatial control. From a design perspective, the value lies in leveraging the performer’s deeply ingrained muscle memory instead of forcing them to adopt a completely foreign interface. The approach aims for a high degree of transparency, so that the bow remains a familiar tool even as its capabilities expand. The use of machine learning introduces a specific tension around control: the system must feel responsive rather than autonomous if it is to keep the performer’s trust. By using the bow to direct sound within a three-dimensional speaker array, the interaction extends beyond the physical instrument and treats the entire performance space as a manipulable environment; the performer essentially uses the bow to paint sound across the room. The success of such a system hinges on managing the cognitive demands placed on the artist, ensuring that the added digital layers enhance expression rather than distract from it. This integration points to a future in which digital and acoustic elements are woven together through the performer’s physical gestures and the specific acoustics of the room.
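
To make the idea of a direct mapping concrete, here is a minimal sketch; it is not the MetaBow implementation. It assumes, hypothetically, that the bow’s IMU yields a yaw angle in degrees and that the room has a horizontal ring of equally spaced speakers. The helper name `yaw_to_speaker_gains` is illustrative. It pans the sound between the two nearest speakers with a constant-power law, so the same gesture always lands in the same place, which is the kind of predictability a direct-mapping layer is meant to give the performer.

```python
import math


def yaw_to_speaker_gains(yaw_deg: float, num_speakers: int) -> list[float]:
    """Map a bow yaw angle (degrees) to gains for a ring of equally spaced speakers.

    Hypothetical setup: speaker i sits at azimuth i * 360 / num_speakers.
    The sound is panned between the two neighbouring speakers with a
    constant-power (cosine/sine) law, so the summed power of all gains is 1.
    """
    if num_speakers < 2:
        raise ValueError("need at least two speakers")
    spacing = 360.0 / num_speakers
    position = (yaw_deg % 360.0) / spacing   # fractional speaker index
    left = int(position) % num_speakers      # speaker at or just below the angle
    right = (left + 1) % num_speakers        # next speaker around the ring
    frac = position - int(position)          # 0.0 at `left`, approaching 1.0 at `right`
    gains = [0.0] * num_speakers
    gains[left] = math.cos(frac * math.pi / 2)
    gains[right] = math.sin(frac * math.pi / 2)
    return gains


# A yaw of 22.5 degrees with 8 speakers (45 degrees apart) sits halfway
# between speakers 0 and 1, so both receive equal gain.
print([round(g, 3) for g in yaw_to_speaker_gains(22.5, 8)])
```

A learned model for the more nuanced spatial control could then adjust these gains on top of the fixed mapping; that layer is not sketched here.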

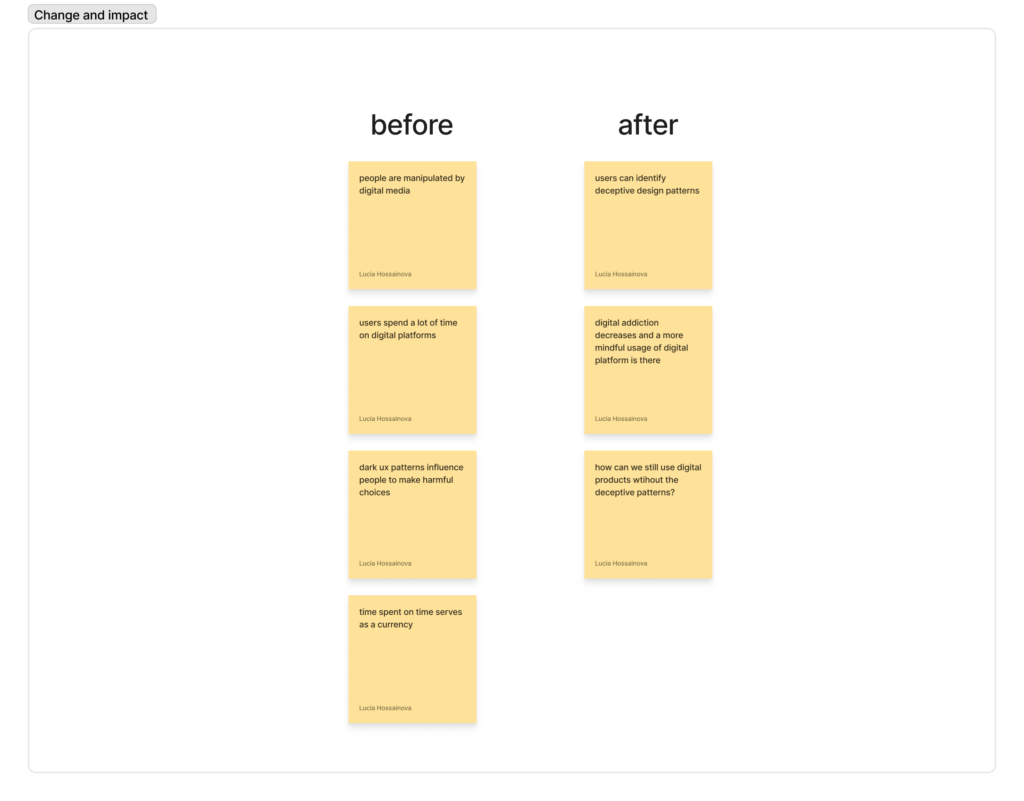

In an era defined by a constant barrage of pings, buzzes, and red notification badges, our relationship with digital products has reached a breaking point. We have moved beyond simple utility into a state of chronic distraction, where the devices in our pockets function less like tools and more like “dopamine-dispensing machines.” This shift is not an accident of poor design; it is the result of systematic psychological manipulation aimed at maximizing engagement, a corporate euphemism for the capture of human attention. To counter this, a fundamental shift in user experience is required: the move toward Calm Technology. This design philosophy, articulated by Mark Weiser and John Seely Brown at Xerox PARC in the mid-1990s, treats human attention as a precious, scarce resource that deserves protection rather than exploitation.

To understand the necessity of Calm Technology, we must first confront the “dopamine dilemma” inherent in modern software. Many social media and streaming platforms are built on persuasive design patterns specifically engineered to hijack our reward systems. The “pull-to-refresh” mechanism, for example, inserts a brief, suspenseful delay before new content appears, heightening anticipation much as pulling a slot-machine lever does. Infinite scroll removes the natural “stopping cues” that allow for reflection, while notification badges exploit the Zeigarnik effect: the psychological tension we feel when a task is left unfinished.

The biological impact of these patterns is profound. When we are constantly rewarded with likes, comments, or algorithmic “discoveries,” our brains adapt by raising the baseline for stimulation. Everyday, non-digital experiences then feel underwhelming, which feeds a compulsive need to check devices even when we have no conscious desire to do so. According to research on this question, users estimate that eliminating these persuasive design elements would reduce their screen time by an average of 37 to 65 percent. That points to a staggering gap between how much time we want to spend online and how much time we are manipulated into spending.

Calm Technology offers an ethical alternative by shifting information into our peripheral awareness. The core principle is that technology should only move to the center of our attention when it is genuinely necessary. Rather than demanding immediate focus through a high-stakes push notification, a calm interface uses subtle, low-resolution signals. Consider the classic example of an “enchanted umbrella” whose handle glows softly when rain is forecast. This provides a helpful nudge that stays in the periphery; you notice it as you walk out the door, but it never interrupts your conversation or your train of thought.

From a UX perspective, this involves several practical strategies. Designers can implement “constructive friction,” which adds a brief moment of reflection before a user opens a habit-forming app, or “glanceable interfaces” that provide essential data without requiring a deep dive into an addictive feed. It also involves “ambient awareness”: using environmental design or haptic cues that respect human biology. Instead of asking “How can we maximize time on screen?”, designers start asking “What is the minimum amount of technology needed to solve this user’s problem?” This principle of sufficiency is the direct opposite of the feature bloat often seen in products optimized for addiction.

The shift toward Calm Technology is, at its heart, an ethical imperative. Traditional persuasive design is intrinsically manipulative because it targets psychological vulnerabilities without the user’s explicit knowledge, often for the financial gain of the company rather than the benefit of the individual. This raises serious questions about autonomy and self-determination. Calm Technology respects the user as an autonomous being. It embraces “cognitive sustainability,” the idea that our mental energy is a limited resource that we should be allowed to spend on things that truly matter to us.

While the adoption of calm principles is currently slowed by business models that still reward engagement metrics, the tide is turning. Users are becoming increasingly aware of manipulative patterns, and trust is becoming a more valuable long-term asset than short-term screen time. Furthermore, regulatory bodies, particularly in the European Union, have begun calling for bans on addictive design techniques and for a “digital right not to be disturbed.”

Ultimately, the goal of UX shouldn’t be to see how much of a person’s life we can capture within an app. It should be to facilitate human flourishing. By moving away from dopamine-driven addiction and toward a calmer, more respectful digital environment, we can build products that serve us rather than enslave us. Transitioning to Calm Technology isn’t just a design choice; it is a commitment to a more sustainable and ethical future for the human mind.

Sources:

Humane Tech. (2021, July 15). The social dilemma: Your phone is a slot machine [Video]. YouTube. https://youtu.be/clxm5qW3pao

NetPsychology. (2024). The reward circuit: Dopamine and the science of digital addiction. https://netpsychology.org/the-reward-circuit-dopamine-and-digital-addiction/

Note: This text was developed with the assistance of artificial intelligence for research purposes and to refine the linguistic clarity and flow of the final draft.

In the complex architecture of modern digital products, the most successful designs are often the ones that don’t need to shout to be heard. Instead of forcing users down a specific path through rigid constraints, sophisticated designers employ the behavioral-economics strategy of “nudging.” A nudge is a subtle change to the choice environment that makes a preferred option easier or more attractive without blocking alternatives or removing the user’s freedom of choice. It is the digital equivalent of placing fruit at eye level in a cafeteria: you aren’t banning the junk food, but you are making the healthier choice the path of least resistance. In UI/UX and motion design, nudging is the art of steering attention and reducing friction to encourage “better” actions through layout, defaults, and animation.

The core of digital nudging lies in “choice architecture”: the way options are ordered, grouped, and framed. This begins with the power of smart defaults. Because humans are naturally inclined toward the status quo, pre-selecting options that align with a user’s best interest, such as opting into security alerts or a recommended service plan, serves as a high-impact nudge. Crucially, ethical nudging requires low-cost reversibility. A “canonical” digital nudge, like an auto-enrollment feature, must always be accompanied by a clear and simple opt-out control. By making the recommended path the default, designers leverage cognitive biases to help users achieve their goals more efficiently while preserving their ultimate autonomy.
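
A smart default paired with low-cost reversibility can be sketched as a small settings record. This is a minimal illustration, not any product’s actual model: `NotificationSettings` and its two options are hypothetical. Security alerts start enabled because that serves the user, marketing email starts disabled (no pre-checked box), and any default can be reversed with one call or all of them restored with another.

```python
from dataclasses import dataclass, field, fields


@dataclass
class NotificationSettings:
    # Hypothetical options; defaults are picked in the user's interest.
    security_alerts: bool = True     # nudge toward protection
    marketing_emails: bool = False   # no pre-checked marketing box
    # Names of options the user has changed, so the UI can flag them.
    changed_by_user: set = field(default_factory=set)

    def _option_names(self) -> set:
        return {f.name for f in fields(self) if f.name != "changed_by_user"}

    def set_option(self, name: str, value: bool) -> None:
        """Opt in or out in one step: reversing a default costs a single action."""
        if name not in self._option_names():
            raise KeyError(name)
        setattr(self, name, value)
        self.changed_by_user.add(name)

    def reset_to_defaults(self) -> None:
        """Return every option to its recommended default in one step."""
        defaults = NotificationSettings()
        for name in self._option_names():
            setattr(self, name, getattr(defaults, name))
        self.changed_by_user.clear()


settings = NotificationSettings()
settings.set_option("security_alerts", False)  # the user opts out
print(settings.security_alerts, settings.changed_by_user)
settings.reset_to_defaults()
print(settings.security_alerts, settings.changed_by_user)
```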

Visual hierarchy and salience play an equally vital role in shaping these decisions. By using stronger contrast, larger typography, or strategic placement for primary actions, designers can guide the eye toward the most beneficial choice. This isn’t about hiding secondary options, but about de-emphasizing them to reduce cognitive load. This management of friction is a delicate balance. We strive to remove micro-barriers for desired behaviors, like Amazon’s 1-Click ordering, while intentionally adding “confirmatory friction” for risky actions. A simple prompt asking, “Are you sure you want to delete your account?” is a nudge toward reflection, preventing accidental or impulsive decisions that the user might later regret.

Motion design serves as a powerful amplifier for these nudges by managing user attention over time. Static interfaces can be overlooked, but human vision is especially sensitive to movement. Subtle animations, such as a gentle pulse on a “Complete Profile” button or a soft bounce when a new recommendation appears, draw focus without being intrusive. Industry research suggests that these motion-based cues can increase task completion rates by making the intended path visually prominent. Motion also provides the feedback loops necessary for confidence. A ripple effect when a button is pressed or a checkmark that animates upon form validation reduces “error anxiety,” nudging the user to proceed with certainty.

Beyond directing attention, motion design helps manage the perceived effort of a task. Fluid transitions between states, like a list expanding into a detailed view, maintain spatial continuity. This prevents the disorientation that occurs with abrupt page jumps, effectively nudging the user to stay mentally engaged. Similarly, animated loaders and progress indicators tap into the “goal-gradient effect.” By visually demonstrating that a user is “almost there,” motion makes a wait feel shorter and nudges the user to finish the flow rather than abandoning it. Onboarding experiences frequently use these “teaching animations” to accelerate learning, making complex features feel intuitive and reducing the friction of the unknown.

However, the line between an ethical nudge and a manipulative “dark pattern” is defined by intent and transparency. For a nudge to be ethical, it must genuinely benefit the user, be transparent in its intent, and provide an easy way to undo the action. Motion becomes manipulative when it uses aggressive flashing to pressure a user into a sale or draws attention away from critical privacy settings. Designers must define user-welfare goals up front, such as helping a user secure their account, and then test these nudges with real users to ensure they support autonomy rather than undermine it.

As we move deeper into the era of hyper-personalized interfaces, nudging will continue to evolve from a static design choice into a dynamic conversation between the system and the user. The most effective nudges are almost invisible; they feel like a helpful suggestion from a reliable partner. By combining the principles of choice architecture with the persuasive power of motion, we can create interfaces that respect the user’s time and intelligence while gently guiding them toward successful outcomes.

Sources:

EBSCO. (2024). Nudge theory: Economics research starter. https://www.ebsco.com/research-starters/economics/nudge-theory

MoldStud. (2023, November 15). The role of motion design in enhancing user experiences. https://moldstud.com/articles/p-the-role-of-motion-design-in-enhancing-user-experiences

Tuts+ Business. (2024, January 30). Dark patterns vs. nudging in UX design: Understanding the ethical line. https://webdesign.tutsplus.com/dark-patterns-vs-nudging-in-ux-design--cms-107582a

University of Chicago News. (2023, April 20). What is behavioral economics? https://news.uchicago.edu/explainer/what-is-behavioral-economics

Note: This text was developed with the assistance of artificial intelligence for research purposes and to refine the linguistic clarity and flow of the final draft.

The digital landscape has reached a point where a static interface feels like a broken one. We have become accustomed to a world that breathes, reacts, and communicates through movement. This shift represents the evolution of Motion UI and micro-interactions from decorative flourishes into essential functional strategies. Far from being “eye candy,” motion design is now the connective tissue of the user experience, bridging the gap between a series of disconnected screens and a cohesive, intuitive journey. By understanding the structural components of these interactions and the deep-seated psychology that drives our response to them, designers can create products that don’t just work, but feel alive and responsive.

Motion UI is the broad strategic use of movement to clarify a product’s information architecture, while micro-interactions are the small, single-task moments that occur within it: the bounce of a “like” button, the subtle ripple of a haptic touch, or the fluid progress of a loading bar. Every successful micro-interaction is built on a four-part framework: a trigger that starts the action, rules that govern what happens, visual or haptic feedback that communicates the result, and loops that determine how the interaction behaves over time and on repeated use. When these four elements are aligned, the interface feels natural and predictable.
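
The four parts map neatly onto a tiny state machine. This is a minimal, hypothetical model of a “like” button, not any framework’s API: the trigger is the `tap()` call, the rules toggle the state, the feedback is returned as the name of an animation to play, and the loop is a tap counter that tones the celebration down after the first few uses.

```python
from dataclasses import dataclass


@dataclass
class LikeButton:
    liked: bool = False
    taps: int = 0  # loop: how many times this interaction has run

    def tap(self) -> str:
        """Trigger: the user taps the button. Returns the feedback to render."""
        self.taps += 1
        # Rules: toggle the state; the same input always yields the same outcome.
        self.liked = not self.liked
        # Feedback: name the animation that confirms the new state.
        if self.liked:
            # Loop: after the first few taps, drop the extra burst.
            return "bounce+burst" if self.taps <= 3 else "bounce"
        return "fade-out"


button = LikeButton()
print([button.tap() for _ in range(5)])
# ['bounce+burst', 'fade-out', 'bounce+burst', 'fade-out', 'bounce']
```

Keeping the rules deterministic is what makes the interaction predictable; only the feedback varies with the loop state.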

The reason these movements are so effective lies in human psychology. Our brains are biologically hardwired to notice motion, a survival trait that now serves to guide our attention toward critical calls-to-action or away from errors. Beyond mere attention-grabbing, motion provides essential feedback loops that reduce user anxiety. When a button depresses or a sphere bounces, it serves as a non-verbal confirmation that the system has registered the user’s intent, preventing the frustration of duplicate clicks. Smooth transitions also ease our “cognitive load” by providing spatial context; as a window expands or a list slides, our brains understand exactly where the information came from and where it went, preventing the jarring “teleportation” effect of static page jumps.

However, with great power comes the need for great restraint. The guiding principle for 2026 remains “less is more.” Purposeful motion should indicate a state change or guide the user; it should never exist for its own sake. Consistency is vital: if a “submit” button slides in from the right, every similar confirmation should follow the same visual logic, creating a reliable language the user can learn. This requires careful attention to easing and timing. Linear movement feels robotic and unnatural, so designers use easing curves, usually defined as cubic Bézier functions, to mimic real-world acceleration and deceleration. Most micro-interactions should be brief, lasting roughly 300 to 600 ms, so that they provide feedback without slowing down the user’s workflow.
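
Easing curves in CSS and most animation tools are cubic Béziers fixed at (0, 0) and (1, 1) with two adjustable control points; the horizontal axis is elapsed time and the vertical axis is progress. The following is a minimal sketch of how such a curve can be evaluated, using the CSS `ease-out` control points (0, 0, 0.58, 1) as the example. Solving x(s) = t by bisection is one simple approach among several; production engines typically use faster numerical solvers.

```python
def cubic_bezier(x1: float, y1: float, x2: float, y2: float):
    """Return an easing function shaped like CSS cubic-bezier(x1, y1, x2, y2).

    Assumes 0 <= x1, x2 <= 1 (as CSS requires), which keeps x(s) monotonic
    so a simple bisection finds the curve parameter for a given time.
    """
    def coord(s: float, p1: float, p2: float) -> float:
        # Bernstein form of one coordinate with P0 = 0 and P3 = 1.
        return 3 * (1 - s) ** 2 * s * p1 + 3 * (1 - s) * s ** 2 * p2 + s ** 3

    def ease(t: float) -> float:
        t = min(max(t, 0.0), 1.0)        # clamp normalized time to [0, 1]
        lo, hi = 0.0, 1.0
        for _ in range(60):              # bisection on x(s) = t
            mid = (lo + hi) / 2
            if coord(mid, x1, x2) < t:
                lo = mid
            else:
                hi = mid
        return coord((lo + hi) / 2, y1, y2)  # progress at that time

    return ease


ease_out = cubic_bezier(0.0, 0.0, 0.58, 1.0)  # CSS "ease-out"
duration_ms = 400                              # inside the range given above
for elapsed in (0, 100, 200, 300, 400):
    print(elapsed, round(ease_out(elapsed / duration_ms), 3))
```

The curve moves quickly at first and settles gently, which is why ease-out is a common choice for elements entering the screen.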

Performance is equally critical. An animation that stutters or drops below 60 frames per second can be worse than no animation at all; it breaks the illusion of physical continuity and signals a lack of quality. This technical demand has also shifted the tools we use. While Figma remains the industry standard for static design, tools like Framer and Rive have become popular choices for motion.
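
The 60 fps target translates into a concrete per-frame budget: 1000 ms / 60 ≈ 16.7 ms to compute and paint each frame. The sketch below assumes you already have a list of measured frame times in milliseconds (collecting them is tool-specific and not shown; the sample values are hypothetical) and reports how many frames exceeded that budget.

```python
def frame_budget_report(frame_times_ms: list[float], target_fps: int = 60) -> dict:
    """Summarize measured frame times against the per-frame budget for target_fps."""
    if not frame_times_ms:
        raise ValueError("no frame times supplied")
    budget_ms = 1000.0 / target_fps              # about 16.67 ms at 60 fps
    dropped = [t for t in frame_times_ms if t > budget_ms]
    average_ms = sum(frame_times_ms) / len(frame_times_ms)
    return {
        "budget_ms": round(budget_ms, 2),
        "dropped_frames": len(dropped),
        "dropped_ratio": len(dropped) / len(frame_times_ms),
        "effective_fps": round(1000.0 / average_ms, 1),
    }


samples = [15.9, 16.2, 16.4, 33.1, 16.0, 16.3]  # hypothetical measurements
print(frame_budget_report(samples))
```

A single 33 ms frame is exactly the kind of visible stutter described above, even when the other frames stay within budget.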

As we look toward the future, motion design is becoming increasingly intelligent. We are seeing the rise of AI-powered motion, where algorithms can suggest unique visual compositions and generate predictive animations based on a narrative input. This allows for “generative motion” that adapts to the user’s context in real-time. We are also seeing motion move into 3D spaces through AR and VR integration, making graphics interactive in three dimensions. Yet, despite these high-tech advancements, the most successful designs remain those that follow the benchmarks set by platforms like Apple’s iOS, where haptic feedback and subtle screen tilts feel so integrated that the user doesn’t even consciously realize they are being guided.

In essence, modern motion UI transforms a product from a tool into a partner. It humanizes digital interactions, forging emotional bonds through playful details like the “magnetic” hover effects on a card or the dancing dots of a voice assistant. When executed with precision and accessibility in mind, always respecting user preferences for reduced motion, it creates a seamless flow that guides, reassures, and delights. As the industry moves deeper into 2026, the key takeaway is that motion is no longer a luxury; it is the primary language through which we communicate a product’s reliability and brand identity.
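
Respecting reduced-motion preferences is easiest when every animation duration passes through one decision point. Here is a minimal sketch, assuming the platform exposes the preference as a boolean (on the web it comes from the `prefers-reduced-motion` media query; reading it is not shown). The hypothetical helper `resolve_animation` clamps durations to the 300 to 600 ms range discussed earlier, or replaces the movement with an instant state change when the user has asked for less motion.

```python
def resolve_animation(requested_ms: int, prefers_reduced_motion: bool,
                      min_ms: int = 300, max_ms: int = 600) -> dict:
    """Decide how a micro-interaction should animate.

    With reduced motion, the state change still happens, but instantly,
    so the user keeps the feedback without the movement.
    """
    if prefers_reduced_motion:
        return {"duration_ms": 0, "style": "instant"}
    clamped = min(max(requested_ms, min_ms), max_ms)  # keep within the range
    return {"duration_ms": clamped, "style": "eased"}


print(resolve_animation(1200, prefers_reduced_motion=False))
print(resolve_animation(400, prefers_reduced_motion=True))
```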

Sources:

Interaction Design Foundation. (2024). Micro-interactions: Why details matter in UX design. https://www.interaction-design.org/literature/article/micro-interactions-ux

No Boring Design. (2024, March 12). 10 inspiring examples of micro-interactions in web design. https://www.noboringdesign.com/blog/10-inspiring-examples-of-micro-interactions-in-web-design

Pixel Orbis. (2024). Motion design in UI: A comprehensive guide for modern interfaces. https://pixelorbis.com/motion-design-ui-guide/

Pixso. (2023, November 20). The role of motion design in enhancing user experience. https://pixso.net/articles/motion-design/

Spiral Compute. (2024). Creating engaging web experiences with motion UI design. https://www.spiralcompute.co.nz/creating-engaging-web-experiences-with-motion-ui-design/

Note: This text was developed with the assistance of artificial intelligence for research purposes and to refine the linguistic clarity and flow of the final draft.

In the split second it takes for a landing page to load, a user has already made a profound decision: they have decided whether or not they trust you. This subconscious judgment happens in mere milliseconds, long before a single word of copy is read or a single feature is tested. In the digital landscape of 2026, where AI-generated content and frequent data breaches have made skepticism the default human setting, trust is no longer a “nice-to-have” quality that emerges over time. Instead, it must be an intentional, strategic, and measurable outcome. This is the core of Trust by Design, a framework that treats credibility not as an abstract feeling, but as a tangible asset built through deliberate UI and UX choices across every single touchpoint of the user journey.

To build trust by design, an organization must move past the idea that a “slick” interface is enough. True trust is constructed on six foundational pillars, beginning with radical transparency. Users today demand to know exactly what is happening behind the curtain. This means clearly stating how data is collected, using straightforward language, and being explicitly honest about when a user is interacting with an AI rather than a human. This transparency must be matched by a relentless commitment to consistency. When a button looks different on two different screens or a navigation pattern changes unexpectedly, it creates a “micro-friction” that signals a lack of professionalism. Consistency creates predictability, and predictability is the bedrock of confidence; when a user can accurately anticipate how a system will behave, they feel safe enough to engage more deeply.

Of course, this emotional safety must be backed by visible security. It isn’t enough for a site to be secure; it must look and feel secure. This involves more than implementing HTTPS or multi-factor authentication; it requires the thoughtful placement of trust signals, such as security badges and clear status indicators, that reassure the user at the exact moment they are asked to share sensitive information. This visual reassurance is tied to the broader aesthetic of the product. Clean, uncluttered layouts and high-quality, authentic imagery (real faces rather than polished, generic stock photos) signal that a company is established and reliable. A clear visual hierarchy doesn’t just look good; it reduces the cognitive load on the user, showing that the designers value the user’s time and mental energy.

Beyond the visuals, trust is reinforced by how much control the user feels they have over the experience. Empowerment is a powerful trust-builder. Systems that provide “undo” functions, offer clear confirmation dialogs before destructive actions, and give users granular control over their notifications treat the user as a partner rather than a target. This sense of agency is often cemented in the smallest moments, known as micro-interactions. A progress bar that moves steadily during a checkout process, or a real-time validation checkmark that appears as someone types their email address, provides immediate feedback that the system is responsive and attentive. These small “success beats” reduce anxiety and transform a cold, transactional interface into a living, helpful presence.
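
Undo and confirmation work best together: make routine actions undoable, and reserve confirmation dialogs for what truly cannot be reversed. The following is a minimal sketch under that assumption, using a hypothetical in-memory `ItemList`; a real product would persist the undo history and render the confirmation as an actual dialog rather than the `confirm` callback used here.

```python
class ItemList:
    def __init__(self, items):
        self.items = list(items)
        self._undo_stack = []  # snapshots taken before each reversible change

    def remove(self, item) -> None:
        """Reversible action: no confirmation needed, just record an undo point."""
        self._undo_stack.append(list(self.items))
        self.items.remove(item)

    def undo(self) -> bool:
        """Restore the state before the last reversible change, if there is one."""
        if not self._undo_stack:
            return False
        self.items = self._undo_stack.pop()
        return True

    def delete_all(self, confirm) -> bool:
        """Destructive action: ask first, because it also clears the undo history."""
        if not confirm(f"Delete all {len(self.items)} items? This cannot be undone."):
            return False
        self.items.clear()
        self._undo_stack.clear()
        return True


todo = ItemList(["draft", "review", "ship"])
todo.remove("review")
todo.undo()
print(todo.items)
todo.delete_all(confirm=lambda message: False)  # the user cancels the dialog
print(todo.items)
```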

Implementing a Trust by Design framework requires a systemic shift in how teams work. It starts with deep empathy mapping to understand where users feel most vulnerable, followed by a data-driven approach to identify specific “trust barriers” in the current funnel. These insights must then be translated into design principles that guide every pixel and every line of code. It is a cumulative process; while trust takes thousands of successful interactions to build, it can be shattered by a single hidden fee, a broken link, or a deceptive “dark pattern.” In 2026, as we navigate a world of automated content and heightened data consciousness, Trust by Design is the ultimate differentiator. It transforms the user’s experience from a risky gamble into a reliable partnership, turning fleeting clicks into long-term, meaningful relationships.

Note: This text was developed with the assistance of artificial intelligence for research purposes and to refine the linguistic clarity and flow of the final draft.

We often hear that “attention is the new currency,” but beneath that metaphor lies a much more literal biological reality: the management of the human dopamine system. As users, we have all felt the strange, compulsive pull of the “infinite scroll” or the sudden jolt of anticipation when a red notification bubble appears on our home screen. These experiences are rarely the result of accidental design; they are the product of dopamine-sensitive UX patterns. These mechanisms are specifically engineered to exploit our brain’s reward system, creating powerful engagement loops that can lead to habitual checking, mindless scrolling, and a significant loss of personal autonomy. While these tactics are highly effective at boosting engagement metrics, they represent a growing ethical challenge for designers who must decide whether they are building tools for empowerment or engines for behavioral addiction.

To understand why certain apps are so hard to put down, we have to understand what dopamine actually does. Contrary to popular belief, dopamine isn’t just about feeling pleasure; it is a neuromodulator deeply involved in learning and motivation. It essentially tells the brain, “This is worth doing again.” UX patterns that pair small, low-effort actions, like a thumb-swipe or a tap, with unpredictable rewards train our brains to seek out digital stimulation. Whether it is the social validation of a “like,” a clever animation upon refreshing a feed, or a perfectly timed recommendation, these rewards trigger dopamine responses that reinforce the behavior. Over time, use can slide from intentional engagement into compulsive checking, as the brain begins to prioritize these “quick hits” over more effortful, meaningful tasks.

Dopamine-driven design is filled with features that have become industry standards. Infinite scroll and autoplay are perhaps the most pervasive, as they intentionally remove “stopping cues”: the natural break points that prompt a user to ask, “Do I want to keep doing this?” Without a “next page” button or a pause between videos, the conscious decision-making process is bypassed entirely. This is often combined with variable rewards. Because we don’t know whether the next post will be a boring ad or a hilarious video from a friend, each scroll becomes a gamble, which makes the behavior incredibly difficult to extinguish. Social validation loops intensify this further by tying our dopamine responses to our social status; every follower count or reaction serves as a metric of approval that demands constant monitoring.

When these patterns are used aggressively, they are increasingly categorized as a specific type of dark UX: addictive design. These interfaces often operate below our conscious awareness, leading people to attribute their digital overconsumption to personal weakness or a lack of willpower rather than recognizing that they are being nudged by an interface designed to be “sticky.” Tactics of “engineered urgency,” such as UX copy that screams “Only 2 left!” or “Offer ends soon,” intensify these impulses and create a fear of missing out (FOMO) that makes it psychologically costly to disengage. Over time, this constant state of anticipation and reward-seeking can disrupt our dopamine balance, leading to increased stress, anxiety, and a diminished capacity for deep focus.

However, the tide is beginning to turn. Ethical design teams are realizing that while dopamine-driven loops create short-term spikes in “time-in-app,” they often lead to long-term user burnout and brand resentment. The path forward involves a deliberate rebalancing of incentives, shifting the focus from passive consumption to intentional use. One of the most effective strategies is to restore stopping cues. Instead of an endless feed, platforms can introduce “You’re all caught up” markers or pagination that requires an active “Show more” click. Similarly, making notifications user-driven rather than metric-driven (for example, batching non-urgent alerts or offering granular “do not disturb” settings) can reduce the urge for compulsive checking.
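
Both strategies are straightforward to express in code. The sketch below is a minimal illustration with hypothetical names (`feed_page`, `batch_notifications`, `Notification`): the feed returns a fixed page of unseen posts with an explicit “Show more” or “You’re all caught up” cue instead of loading endlessly, and non-urgent notifications are folded into a single digest while urgent ones are delivered immediately. How urgency is decided is left as an assumption.

```python
from dataclasses import dataclass
from typing import Optional


def feed_page(new_posts: list, offset: int, page_size: int = 10) -> dict:
    """Return one page of unseen posts plus an explicit stopping cue."""
    page = new_posts[offset:offset + page_size]
    next_offset = offset + len(page)
    if next_offset >= len(new_posts):
        # Nothing new left: say so instead of backfilling with older content.
        return {"posts": page, "cue": "You're all caught up", "next_offset": None}
    # More exists, but it only loads after an explicit click.
    return {"posts": page, "cue": "Show more", "next_offset": next_offset}


@dataclass
class Notification:
    text: str
    urgent: bool  # assumption: urgency is decided elsewhere (e.g. security events)


def batch_notifications(pending: list) -> tuple:
    """Deliver urgent items now; fold non-urgent ones into one digest (or None)."""
    immediate = [n.text for n in pending if n.urgent]
    rest = [n.text for n in pending if not n.urgent]
    digest: Optional[str] = (f"{len(rest)} updates: " + "; ".join(rest)) if rest else None
    return immediate, digest


posts = [f"post {i}" for i in range(25)]
print(feed_page(posts, 20)["cue"])
print(batch_notifications([
    Notification("Password changed", urgent=True),
    Notification("Alex liked your photo", urgent=False),
    Notification("New follower", urgent=False),
]))
```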

Designers also have the power to introduce “mindful friction.” This might seem counterintuitive in a field that usually prizes “seamless” experiences, but small hurdles can be beneficial. For example, a prompt that asks, “You’ve been scrolling for 30 minutes, do you want to take a break?” or requiring a confirmation before entering a known “rabbit hole” of content can bring the user’s conscious mind back into the loop. Furthermore, gamification doesn’t have to be manipulative; streaks and badges can be tied to user-centered goals like health, learning, or creativity, provided they offer a “vacation mode” so users aren’t coerced into engagement through the fear of losing progress.
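
A streak with a “vacation mode” can be modeled by treating paused days as neither progress nor failure. This is a minimal sketch with a hypothetical function name, assuming activity and vacation are tracked as sets of calendar dates. An unlogged “today” is also not counted against the user, since the day is not over yet.

```python
from datetime import date, timedelta


def current_streak(active_days: set, vacation_days: set, today: date) -> int:
    """Count consecutive active days ending today (or yesterday).

    Vacation days are skipped: they neither extend nor break the streak,
    so taking a break carries no penalty.
    """
    streak = 0
    day = today
    if day not in active_days and day not in vacation_days:
        # Today isn't finished; an unlogged today shouldn't reset the streak.
        day -= timedelta(days=1)
    while True:
        if day in active_days:
            streak += 1
        elif day not in vacation_days:
            break  # a normal day with no activity ends the streak
        day -= timedelta(days=1)
    return streak


active = {date(2024, 5, d) for d in (1, 2, 3, 4, 5, 9)}
vacation = {date(2024, 5, d) for d in (6, 7, 8)}
print(current_streak(active, vacation, today=date(2024, 5, 10)))
print(current_streak(active, set(), today=date(2024, 5, 10)))
```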

Ultimately, the shift toward ethical UX requires a change in how we define success. If a platform’s only metric is “seconds spent on screen,” dark patterns are inevitable. But if we incorporate user well-being, satisfaction, and intentionality into our KPIs, the design naturally follows a more respectful path. As you audit your own work, ask yourself: Does this feature rely on unpredictable rewards? Am I making it harder to leave than to stay? Could a tired or impulsive person be easily manipulated here? If the answer is yes, it’s a signal to redesign. By moving away from exploitation and toward autonomy, we can create digital environments that respect the human mind rather than just harvesting its attention.

Sources:

Gori, A. (n.d.). Why the infinite scroll is so addictive: Insights from behavioral psychology. GoriUX. https://goriux.com/ux/why-the-infinite-scroll-is-so-addictive-insights-from-behavioral-psychology/

Pila. (2024, January 15). Designing for dopamine: UX patterns and user behavior. https://blog.pilapk.com/it/designing-for-dopamine/238/

Stafford, T. (n.d.). Designing for dopamine. UX Magazine. https://uxmag.com/articles/designing-for-dopamine

Weizenbaum Journal of the Digital Society. (2023). The ethics of dopamine-driven design: Behavioral patterns in digital interfaces. https://ojs.weizenbaum-institut.de/index.php/wjds/article/view/5_3_2/189

Xientory. (2025, June). Dopamine reward design techniques in UX: Creating sustainable engagement. https://www.xientory.com/2025/06/dopamine-reward-design-techniques-in-ux.html

Note: This text was developed with the assistance of artificial intelligence for research purposes and to refine the linguistic clarity and flow of the final draft.

The line between helpful guidance and manipulation has become increasingly blurred. We have all experienced it: the subscription that takes seconds to join but requires a marathon of phone calls and hidden links to cancel, or the “limited time offer” with a countdown timer that magically resets every time the page is refreshed. These are not accidental design flaws; they are known as Dark UX Patterns. These deceptive design tactics are engineered to exploit our psychological vulnerabilities, nudging us into making decisions that serve a company’s bottom line rather than our own best interests. While they might generate quick profits, they are increasingly becoming a liability for brands that value long-term loyalty and regulatory safety.

The term “dark patterns” was coined in 2010 by UX specialist Harry Brignull, and since then the practice has grown into a sophisticated science of digital coercion. Unlike ethical design, which aims to make user journeys smoother, dark patterns deliberately subvert user autonomy. They modify the “decision space” by obscuring important information or using confusing language to steer behavior. This practice is now nearly ubiquitous. Recent data shows that roughly 76% of subscription websites and apps globally use at least one dark pattern, and that 97% of the most popular websites and apps deploy some form of deceptive design. From e-commerce giants like Amazon and Shein to streaming services like Netflix and Hulu, these tactics have become a standard, if ethically questionable, part of the digital toolkit.

These patterns thrive because they are built on the foundations of human psychology. Dark patterns exploit cognitive biases: systematic errors that arise from the mental shortcuts our brains use to process information quickly. For instance, the “Default Effect” takes advantage of our tendency to accept pre-selected options; if a “Receive Marketing Emails” box is already checked, most users won’t exert the effort to uncheck it. Similarly, “Drip Pricing” exploits the sunk cost fallacy. By revealing taxes and hidden fees only at the final stage of a purchase, companies bank on the fact that the user has already invested too much time and emotional energy to back out. Other tactics, like “Confirmshaming,” use emotional manipulation to make users feel guilty for opting out, replacing a neutral “No thanks” with phrases like “No, I don’t want to save money.”
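
The transparent alternative to drip pricing is to compute the all-in price up front and show it from the first screen. The following is a minimal sketch with hypothetical fee names and a single flat tax rate; real tax rules vary by jurisdiction. Amounts are kept in integer cents to avoid floating-point rounding in displayed prices.

```python
from dataclasses import dataclass


@dataclass
class PriceQuote:
    base_cents: int
    fees_cents: dict   # every mandatory fee, named, e.g. {"service fee": 299}
    tax_rate: float    # assumption: one flat rate applied to base + fees

    def total_cents(self) -> int:
        subtotal = self.base_cents + sum(self.fees_cents.values())
        return subtotal + round(subtotal * self.tax_rate)

    def headline(self) -> str:
        """The price on the very first screen already includes everything."""
        total = self.total_cents()
        return f"${total // 100}.{total % 100:02d} total (incl. all fees and tax)"


quote = PriceQuote(4999, {"service fee": 299, "delivery": 500}, tax_rate=0.08)
print(quote.headline())
```

Because the headline and the checkout read from the same `total_cents()`, the number a user first sees cannot drift upward at the last step.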

However, the short-term conversion boost provided by these tricks comes at a heavy price. Studies report that dark patterns cause genuine psychological distress, with EEG measurements showing elevated stress markers in users navigating “hard to cancel” flows. Beyond the immediate frustration, there is a profound erosion of trust. Over half of consumers report losing trust in a platform after encountering manipulative design, and nearly 43% say they will stop purchasing from a retailer entirely. For vulnerable populations, such as children or the elderly, these patterns are even more predatory, often leading to financial harm and compulsive behavior.

The business case for dark patterns is also crumbling under the weight of new regulation, and companies that refuse to adapt face serious long-term risk. Authorities have moved from warnings to large-scale enforcement: in 2024 and 2025, regulators in the EU, the US, and South Korea began imposing penalties, with fines under some laws reaching up to 10% of a company’s global turnover. Amazon’s recent $2.5 billion settlement over its deceptive Prime cancellation process serves as a landmark warning: the “Roach Motel” model, where it’s easy to check in but nearly impossible to leave, is now a legal and financial liability.

Ultimately, the most successful companies in the coming years will be those that embrace “Fair UX.” While dark patterns might offer a 5% to 30% lift in immediate conversions, transparent and respectful design proves more profitable in the long run. Fair design attracts users who stay because they want to be there, not because they were tricked into a subscription whose “exit” button they can’t find. As consumer awareness grows and the law tightens its grip, the choice for businesses is clear: prioritize the quick click and risk everything, or invest in the long-term trust that fuels sustainable growth.

Sources:

AcoWebs. (2024, April 24). Dark patterns in e-commerce: How they manipulate consumers. https://acowebs.com/dark-patterns-ecommerce/

Didomi. (2023, July 5). What are dark patterns? Definition, examples, and regulations. https://www.didomi.io/blog/what-are-dark-patterns

UX Psychology. (2023, June 12). Dark patterns: Using human psychology to manipulate users. https://uxpsychology.substack.com/p/dark-patterns-using-human-psychology

Note: This text was developed with the assistance of artificial intelligence for research purposes and to refine the linguistic clarity and flow of the final draft.