Eye-tracking sounds deceptively simple: you track where someone looks, and suddenly you “understand” perception. Unfortunately (or fortunately, depending on your tolerance for complexity), it is not that straightforward. There isn’t just one way to track eyes. There are multiple methods, each producing different kinds of data, each shaping what you think you are observing. So before interpreting gaze behaviour, it is essential to understand how that gaze was captured in the first place.

1. Screen-Based Eye-Tracking

Screen-based eye-tracking represents one of the most established and controlled methods for analyzing visual attention. Often referred to as the “lab classic,” it provides a highly precise way of measuring how users engage with visual content on a screen. In this setup, participants are positioned in front of a stationary device that tracks their eye movements while they observe a digital stimulus. This could be anything from a website interface to a poster layout or typographic composition. The controlled environment allows researchers to isolate visual variables and focus specifically on how attention is distributed across a given design.

Technically, the system projects infrared light onto the eye, where it reflects off the cornea. Cameras capture this reflection, typically together with the position of the pupil, and use it to estimate the direction of the gaze. The estimate is then mapped onto the two-dimensional screen, allowing researchers to reconstruct where and for how long a participant looked at specific elements within the design.

The strength of this method lies in its precision. It allows for detailed analysis of visual behavior, making it particularly suitable for graphic design, UI and UX design, typography, and layout studies. Designers can identify whether key elements such as headlines, images, or navigation structures are effectively capturing attention, or whether they are being overlooked entirely.
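To make the "where and for how long" part concrete, here is a minimal sketch of how fixations could be summed per area of interest (AOI). The `Fixation` and `AOI` types, the rectangle coordinates, and the sample data are all assumptions made for illustration; real eye-tracking software exports its own formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fixation:
    x: float            # horizontal screen position in pixels
    y: float            # vertical screen position in pixels
    duration_ms: float  # how long the eye rested at this position


@dataclass(frozen=True)
class AOI:
    name: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x < self.right and self.top <= y < self.bottom


def dwell_time_per_aoi(fixations, aois):
    """Sum fixation durations per AOI; fixations outside every AOI are ignored."""
    totals = {aoi.name: 0.0 for aoi in aois}
    for fix in fixations:
        for aoi in aois:
            if aoi.contains(fix.x, fix.y):
                totals[aoi.name] += fix.duration_ms
                break  # AOIs are assumed not to overlap
    return totals


# Hypothetical poster layout on a 1000 x 1400 px screen.
aois = [
    AOI("title", 0, 0, 1000, 200),
    AOI("image", 0, 200, 1000, 900),
    AOI("text", 0, 900, 1000, 1300),
    AOI("footer", 0, 1300, 1000, 1400),
]
fixations = [
    Fixation(500, 100, 250),
    Fixation(480, 520, 400),
    Fixation(610, 640, 350),
    Fixation(300, 1000, 120),
]
dwell = dwell_time_per_aoi(fixations, aois)
print(dwell)
```

With this made-up data the image collects the most dwell time and the footer none, which is the kind of comparison a designer would read off such a summary.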

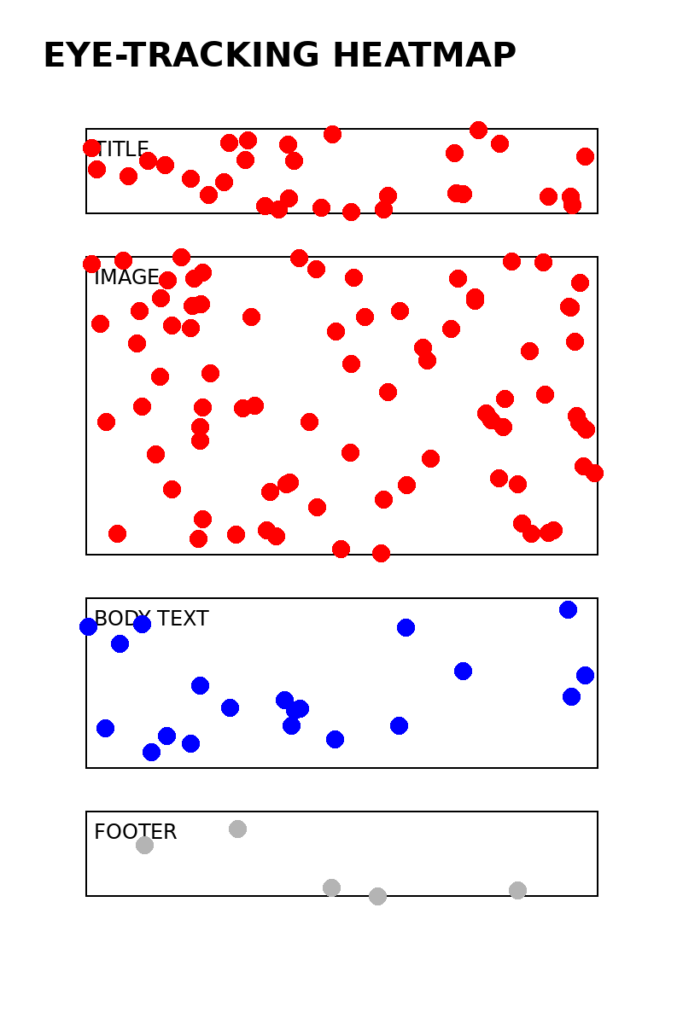

However, this level of control also introduces certain limitations. The artificial nature of the lab setting reduces real-world context. Participants remain stationary, and natural body movement is restricted. As a result, the findings reflect focused screen-based interaction rather than more dynamic, environmental forms of perception. To visualize how this data is typically interpreted, heatmaps are often used. These maps translate gaze data into areas of intensity, highlighting where attention is concentrated and where it fades.

In this example, the viewer’s gaze is primarily drawn to the image and title, while the body text receives significantly less attention. The footer is nearly ignored. This kind of visualization makes it immediately clear how hierarchy, scale, and placement influence perception. To extend this further into a more dynamic visualization, gaze sequences can also be mapped as a path across the layout:

START → Title → Image → Image → Text → Image → (exit)

This sequence reflects not just where attention occurs, but how it moves over time. It reveals patterns such as repeated focus on dominant elements or skipping behavior across less engaging areas. Ultimately, screen-based eye-tracking becomes a tool for making the invisible visible. It translates subconscious visual behavior into measurable data. If the goal is to understand whether a design communicates effectively at first glance, or whether certain elements fail to engage, this method offers a direct and reliable way of observing that interaction.

It answers a very specific question with clarity: where do people actually look?
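The gaze sequence shown earlier in this section can be derived from a list of fixations once each fixation has been assigned to a layout element. The sketch below assumes that assignment has already happened and that the labels are simply the element names; the function names are hypothetical.

```python
from itertools import groupby


def aoi_sequence(labels, collapse_repeats=False):
    """Return the visited elements in order, skipping fixations with no label."""
    seq = [label for label in labels if label is not None]
    if collapse_repeats:
        # Merge consecutive fixations on the same element into one visit.
        seq = [key for key, _ in groupby(seq)]
    return seq


def count_revisits(labels):
    """Count how often the gaze returns to an element after having left it."""
    visits = aoi_sequence(labels, collapse_repeats=True)
    seen = set()
    revisits = {}
    for label in visits:
        if label in seen:
            revisits[label] = revisits.get(label, 0) + 1
        seen.add(label)
    return revisits


# One label per fixation, in the order the fixations occurred.
labels = ["Title", "Image", "Image", "Text", "Image"]
path = " → ".join(["START", *aoi_sequence(labels), "(exit)"])
revisits = count_revisits(labels)
print(path)
print(revisits)
```

Counting revisits separately from consecutive fixations keeps "looked at the image for a long time" apart from "came back to the image after reading the text", which are different behaviours.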

2. Mobile Eye-Tracking Glasses

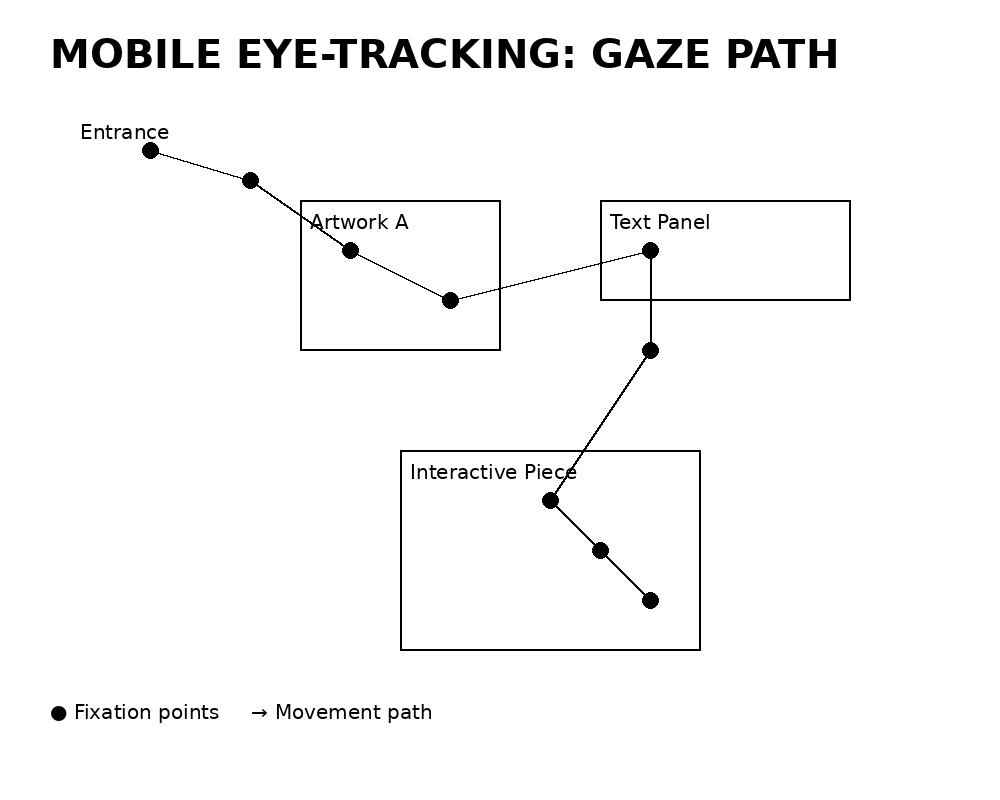

Mobile eye-tracking glasses represent a shift from controlled laboratory conditions to real-world observation. Instead of viewing static stimuli on a screen, participants move freely through physical environments while their gaze behavior is recorded in real time.

In this setup, participants wear lightweight glasses equipped with multiple cameras. A forward-facing scene camera captures the surrounding environment, while inward-facing eye cameras track pupil movement. The two data streams are then combined, allowing gaze points to be overlaid onto a continuously moving field of view.

This method is particularly well suited to contexts where spatial interaction plays a central role, such as exhibitions, spatial design environments, wayfinding systems, and retail spaces. Unlike screen-based setups, it captures how attention unfolds dynamically in relation to movement, orientation, and environmental context.

However, this increased realism comes with certain trade-offs. Compared to stationary eye-tracking systems, the level of precision is lower. The data itself is also more complex to analyse, as it combines movement, shifting perspectives, and changing visual stimuli. In addition, calibration requires careful setup and can introduce variability into the results.

To visualize this type of data, gaze paths are often mapped within a spatial layout, showing how attention unfolds in space and time, not just on a flat surface.
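Combining the eye cameras with the scene camera is, among other things, a timing problem: the eye cameras usually sample faster than the scene video, so each gaze sample has to be matched to a frame. The sketch below shows one simple way to do that by nearest timestamp. The sampling rates, the normalised gaze coordinates, and the function names are assumptions; actual glasses ship with their own synchronisation.

```python
from bisect import bisect_left


def nearest_frame_index(frame_times, t):
    """Index of the frame timestamp closest to t.

    frame_times must be sorted ascending and non-empty.
    """
    i = bisect_left(frame_times, t)
    if i == 0:
        return 0
    if i == len(frame_times):
        return len(frame_times) - 1
    before, after = frame_times[i - 1], frame_times[i]
    return i if (after - t) < (t - before) else i - 1


def gaze_per_frame(frame_times, gaze_samples):
    """Group (t, x, y) gaze samples by the scene frame nearest in time."""
    per_frame = {i: [] for i in range(len(frame_times))}
    for t, x, y in gaze_samples:
        per_frame[nearest_frame_index(frame_times, t)].append((x, y))
    return per_frame


# Assumed 25 fps scene camera; timestamps in seconds.
frame_times = [0.000, 0.040, 0.080]
# Gaze samples as (timestamp, x, y), with x and y normalised to the scene image.
gaze_samples = [
    (0.005, 0.50, 0.50),
    (0.015, 0.51, 0.50),
    (0.035, 0.55, 0.48),
    (0.070, 0.60, 0.45),
]
overlay = gaze_per_frame(frame_times, gaze_samples)
print(overlay)
```

Each frame ends up with zero or more gaze points that can be drawn on top of it, which is essentially what the familiar "dot on the video" overlay shows.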

3. Fixation Mapping vs. Gaze Plotting

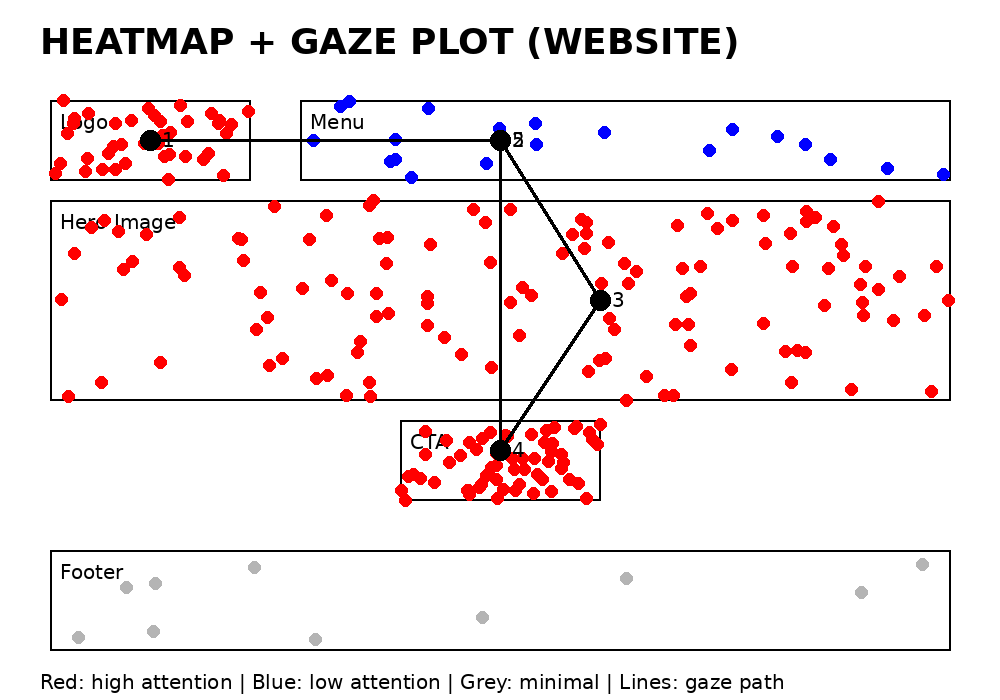

Once eye-tracking data has been collected, the focus shifts from observation to visualization. The central question becomes how this data can be translated into a format that reveals meaningful patterns of visual attention. Two primary methods are commonly used for this purpose: fixation maps and gaze plots.

Fixation maps, often referred to as heatmaps, display the intensity of attention across a visual surface. They aggregate data from multiple users and highlight areas where gaze is concentrated over time. Warmer zones indicate higher levels of attention, while cooler areas suggest that elements are either overlooked or only briefly viewed. This type of visualization is particularly useful when evaluating the overall effectiveness of a design, as it reveals which components consistently attract attention across a group of participants.

In contrast, gaze plots, also known as scanpaths, focus on the sequence of eye movements. Instead of showing intensity, they map the order in which visual elements are explored. Individual fixations are numbered and connected by lines that represent the movement of the gaze from one point to the next. This allows for a more detailed understanding of how a design is navigated over time.

In this representation, the numbers indicate the order of attention, while the connecting lines illustrate the direction of gaze movement. Each method answers a different question. Heatmaps reveal what is seen, highlighting areas of focus and neglect. Gaze plots, on the other hand, explain how attention unfolds, showing the path users take as they move through a design.

For a comprehensive understanding of visual behavior, both approaches are necessary. Heatmaps provide an overview of attention distribution, while gaze plots introduce temporal structure. Without one, the analysis remains incomplete, either lacking insight into sequence or missing the broader patterns of engagement.
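For the heatmap side, the aggregation step can be sketched as follows: fixations from all participants are pooled, and each one adds a duration-weighted Gaussian "blob" to a coarse grid. The cell size, the Gaussian width `sigma`, and the sample data are assumptions chosen for readability, not values taken from any particular tool.

```python
import math


def fixation_heatmap(fixations, width, height, cell=50, sigma=60.0):
    """Accumulate duration-weighted Gaussian blobs on a coarse grid.

    fixations: iterable of (x, y, duration_ms), pooled over all participants.
    Returns a list of rows, normalised so that the hottest cell equals 1.0.
    """
    cols = math.ceil(width / cell)
    rows = math.ceil(height / cell)
    grid = [[0.0] * cols for _ in range(rows)]
    for x, y, duration in fixations:
        for r in range(rows):
            cy = (r + 0.5) * cell  # vertical centre of this grid cell
            for c in range(cols):
                cx = (c + 0.5) * cell  # horizontal centre of this grid cell
                d2 = (cx - x) ** 2 + (cy - y) ** 2
                grid[r][c] += duration * math.exp(-d2 / (2 * sigma ** 2))
    peak = max(max(row) for row in grid)
    if peak > 0:
        grid = [[value / peak for value in row] for row in grid]
    return grid


# Hypothetical fixations from two participants on a 200 x 200 px stimulus.
participant_a = [(75, 75, 300)]
participant_b = [(80, 70, 250), (175, 175, 100)]
heat = fixation_heatmap(participant_a + participant_b, width=200, height=200)

for row in heat:
    print(" ".join(f"{value:.2f}" for value in row))
```

Weighting by duration is one design choice among several; counting fixations instead of summing their durations would answer a slightly different question (how often, rather than how long).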

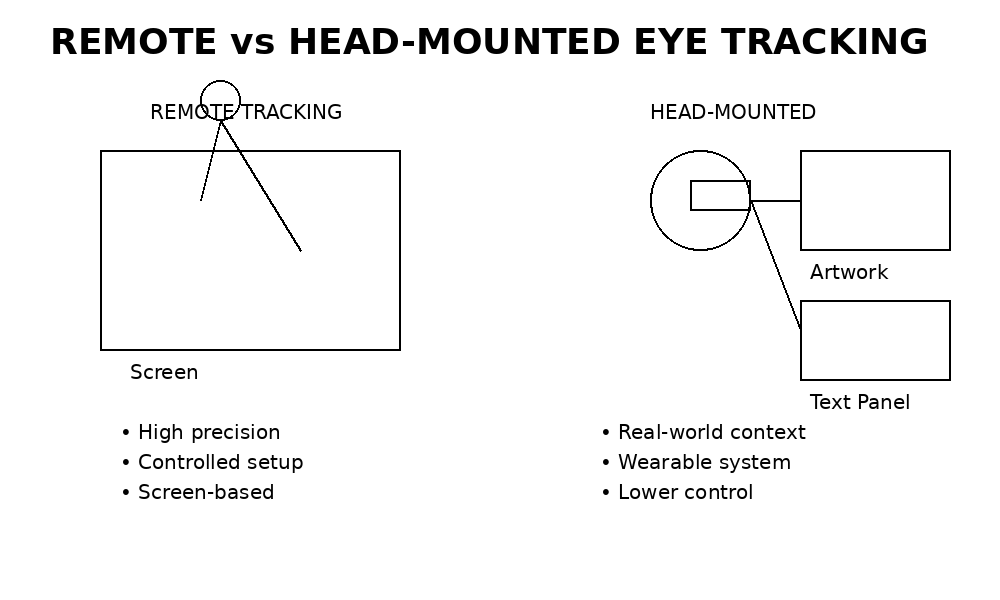

4. Remote vs. Head-Mounted Tracking

A fundamental distinction in eye-tracking lies between remote systems and head-mounted approaches, each offering different advantages depending on the research context. Remote eye-tracking is non-intrusive and typically screen-based. Participants remain stationary while their gaze is recorded with a high level of precision. This setup allows for controlled conditions, making it particularly effective when detailed and accurate measurements are required. In contrast, head-mounted eye-tracking involves wearable devices that participants use while moving through real-world environments. This approach captures visual behavior within natural contexts, where movement, surroundings, and situational factors influence perception. While this increases ecological validity, it also reduces the level of control and precision compared to lab-based systems.

Understanding this distinction is essential when designing experiments. A poster observed in a controlled laboratory setting may produce very different results than the same poster experienced within a crowded exhibition space, where distractions, movement, and environmental complexity shape how attention is distributed.
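When comparing setups, it can help to put a number on data quality. In the eye-tracking literature, accuracy usually refers to how far the measured gaze lies from a known target, and precision to how much consecutive samples scatter. The sketch below computes both in pixels for a participant looking at a single validation target; the function names and sample values are assumptions, and published figures are normally given in degrees of visual angle instead.

```python
import math


def accuracy_px(samples, target):
    """Mean Euclidean offset between gaze samples and the known target."""
    tx, ty = target
    return sum(math.hypot(x - tx, y - ty) for x, y in samples) / len(samples)


def precision_rms_px(samples):
    """Root mean square of sample-to-sample distances (spatial noise).

    Needs at least two samples.
    """
    squared_steps = [
        (x2 - x1) ** 2 + (y2 - y1) ** 2
        for (x1, y1), (x2, y2) in zip(samples, samples[1:])
    ]
    return math.sqrt(sum(squared_steps) / len(squared_steps))


target = (100, 100)

# Steady but shifted: every sample lands 5 px away from the target.
offset_samples = [(103, 104), (103, 104), (103, 104)]
print(accuracy_px(offset_samples, target), precision_rms_px(offset_samples))

# Centred on the target but jumping back and forth between samples.
noisy_samples = [(100, 100), (103, 104), (100, 100)]
print(accuracy_px(noisy_samples, target), precision_rms_px(noisy_samples))
```

The two examples illustrate why the terms are kept apart: a recording can be steady but consistently off target, or roughly on target but noisy.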

Choosing the Right Method (or: It Depends, Obviously)

Each method answers different questions:

| Method | Strength | Best For |
| --- | --- | --- |
| Screen-based | Precision | UI, graphic design |
| Mobile glasses | Real-world behaviour | Exhibitions, spatial design |
| Gaze plots | Sequence of perception | Understanding navigation |
| Dynamic tracking | Temporal behaviour | Motion design, video |

Final Thought

Eye-tracking does not show you everything. It shows you where attention is directed, which is only one layer of perception, but a crucial one. And perhaps the most important realisation: the method you choose does not just measure reality; it frames it.

Sources:

- Holmqvist, K. et al. (2011) Eye Tracking: A Comprehensive Guide to Methods and Measures. Oxford University Press.

- Duchowski, A.T. (2017) Eye Tracking Methodology: Theory and Practice. Springer.

- Wedel, M. & Pieters, R. (2008) Eye Tracking for Visual Marketing. Foundations and Trends in Marketing.

- Poole, A. & Ball, L.J. (2006) Eye tracking in human-computer interaction and usability research.

- Goldberg, J.H. & Wichansky, A.M. (2003) Eye tracking in usability evaluation: A practitioner’s guide.

- Holmqvist, K. & Andersson, R. (2017) Eye Tracking: A Comprehensive Guide to Methods and Measures (2nd ed.).