The previous blog focused primarily on workshops rooted in neuroscientific research. The following three sessions delve into digital signal processing, visual generation from audio, and dynamic spatialisation. Each of them highlights features I am planning to integrate into my personal project.

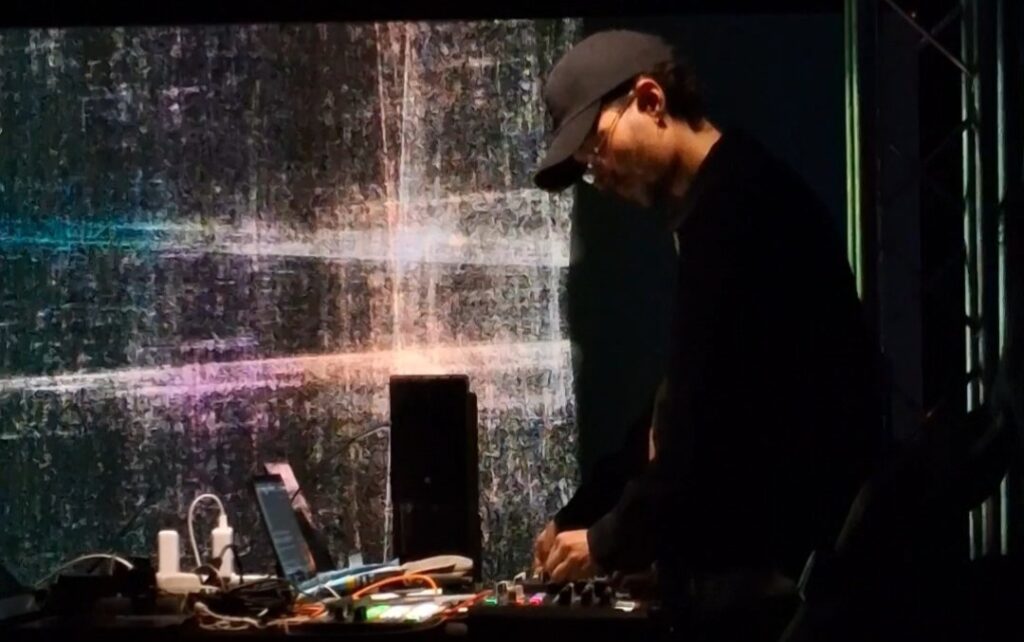

Moving with Time is an immersive audiovisual performance exploring the evolving relationship between sound, image, and culture through real-time interaction. Of all the projects I saw and took part in during the workshop, this performance most closely resembled my research project.

The processes this performance shares with my research project include real-time digital signal processing, that is, transforming and modifying sound as it is played. The performers used granular and concatenative synthesis in Max/MSP to achieve this. I am still unsure whether to include these techniques in my project, but I will definitely implement an interactive feature so that users stay engaged with the installation. Another element common to both projects is real-time visual generation, made using TouchDesigner.

As for the performance itself, I really enjoyed the fusion of musical styles, from the traditional Persian Setar to the warm textures of the ARP Odyssey and the Monotribe. The piece builds progressively and resolves eloquently.

Generative Music from the Quantum World

I could never have imagined using quantum mechanics to make music until Jean-Claude Heudin talked about it. In this fascinating presentation, we were introduced to ANGELIA, a hybrid generative AI developed to enhance the artist's creativity in composing and to augment performative capabilities.

Heudin briefly explained some fundamental concepts of quantum mechanics, such as superposition, entanglement and measurement (or collapse). He also mentioned that qubits existing in superposition states can be subjected to incompatible measurements and can even be entangled with other qubits.

We were also introduced to qunotes, which are like qubits but carry musical notes instead of binary information. The quantum state of a qunote is represented by a linear superposition of a defined number of pitch values. It is only when the piece of quantum music is played that a qunote collapses into a defined state. Each time the same qunote plays, the note obtained can differ, depending on the probability amplitudes. This results in many possible interpretations of the same score, so composing is done with probability amplitudes instead of fixed notes.
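To make the idea concrete for myself, here is a minimal sketch of how a qunote could be simulated classically. It is not ANGELIA's implementation: the `Qunote` class, the MIDI pitch numbers and the amplitude values are all my own assumptions for illustration. The only part taken from quantum mechanics is the standard rule that the probability of an outcome is the squared magnitude of its amplitude.

```python
import random


class Qunote:
    """Hypothetical qunote: a superposition of pitches with complex amplitudes."""

    def __init__(self, amplitudes):
        # amplitudes maps a pitch (here a MIDI note number) to its amplitude.
        norm = sum(abs(a) ** 2 for a in amplitudes.values())
        if norm == 0:
            raise ValueError("at least one amplitude must be non-zero")
        self.pitches = list(amplitudes)
        # Probability of each pitch = |amplitude|^2, normalised to sum to 1.
        self.probabilities = [abs(a) ** 2 / norm for a in amplitudes.values()]

    def measure(self, rng=random):
        """Collapse to a single pitch, drawn according to the probabilities."""
        return rng.choices(self.pitches, weights=self.probabilities, k=1)[0]


# A two-qunote "score" (assumed values): each playback can yield different notes.
score = [
    Qunote({60: 1, 64: 1, 67: 2 ** 0.5}),  # C4, E4, G4 with G4 twice as likely
    Qunote({62: 1j, 65: 1}),               # D4 and F4, equally likely
]

for take in range(3):
    print("take", take, [q.measure() for q in score])
```

Running the loop several times gives a different sequence on most takes, which is the "many possible interpretations of the same score" idea in miniature.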

Dynamic Spatial Mixing for Multi-Channel Audio

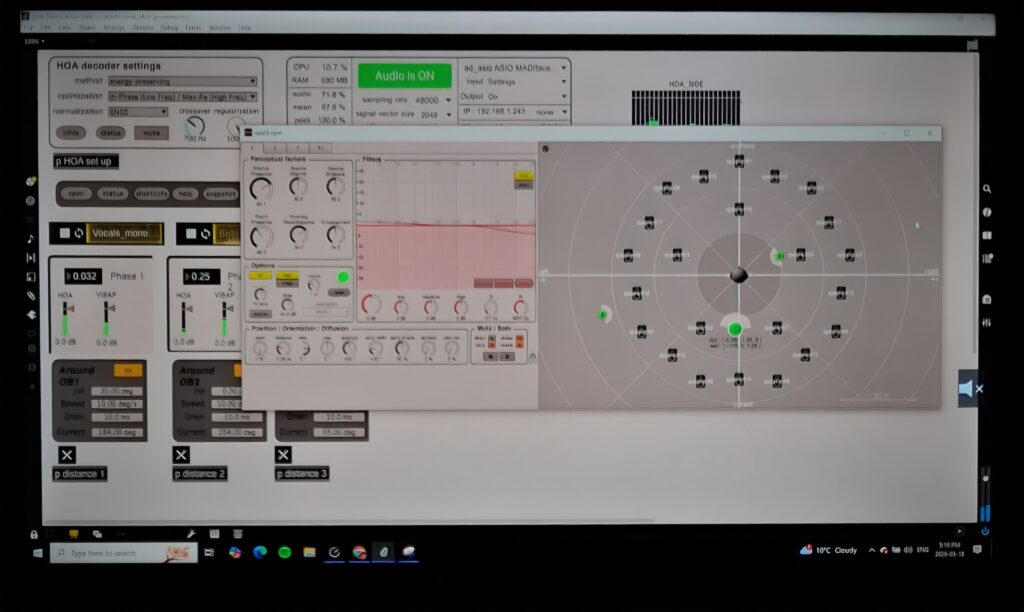

I was drawn to this project because of our course this semester (Surround Sound and spatialization). I also wanted to know more about spatial rendering and how to implement it in a mixing environment. The live demo introduced a system for dynamic spatialization and mixing in multi-channel environments using Max/MSP and IRCAM’s SPAT library. The framework combines adaptive spatial rendering with dynamic mixing to create an evolving, responsive sonic field.

It is a unique approach to spatialisation, as they combined higher-order ambisonics and VBAP into a unified spatial rendering system. They described the interaction between sources as ‘sidechaining’, similar to the technique we use in mixing: audio objects dynamically influence one another and interact with static beds, generating shifting amplitude and frequency relationships and establishing priority-based behaviour between moving and static spatial elements. A parallel binaural rendering was also implemented for headphone users.
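For comparison, this is roughly what conventional sidechain ducking looks like in a mixing context, written as a small Python sketch. It only covers the amplitude side of the idea and has nothing to do with SPAT's actual objects or the presenters' Max/MSP patch. The function names, the attack, release and depth values, and the assumption that the key signal peaks at around 1.0 are all mine.

```python
import math


def envelope(signal, sample_rate, attack_s=0.01, release_s=0.2):
    """One-pole envelope follower on the rectified signal."""
    attack = math.exp(-1.0 / (attack_s * sample_rate))
    release = math.exp(-1.0 / (release_s * sample_rate))
    env = 0.0
    out = []
    for x in signal:
        level = abs(x)
        # Rise quickly when the level goes up, fall slowly when it drops.
        coeff = attack if level > env else release
        env = coeff * env + (1.0 - coeff) * level
        out.append(env)
    return out


def duck(bed, key, sample_rate, depth=0.7):
    """Attenuate the static bed by up to `depth` while the key object is loud."""
    env = envelope(key, sample_rate)
    return [b * (1.0 - depth * min(e, 1.0)) for b, e in zip(bed, env)]


# Assumed test signals: a constant bed, and a moving object that enters halfway.
sample_rate = 1000
bed = [1.0] * 2000
moving_object = [0.0] * 1000 + [1.0] * 1000
ducked = duck(bed, moving_object, sample_rate)
print(ducked[0], ducked[-1])
```

In this sketch the moving object has priority: the bed stays at full level while the object is silent and is pulled down once it enters. As I understood it, the system shown in the demo applies the same kind of relationship between spatialised objects and beds, and extends it to frequency as well as amplitude.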

Overall, I found all the sessions at the IRCAM Forum very insightful. There was a diverse mix of topics and workshops hosted by artists, performers, researchers and engineers. I gathered a lot of interesting ideas and was introduced to some really cool concepts, some of which I will attempt to apply to my research project.