What problem are you solving?

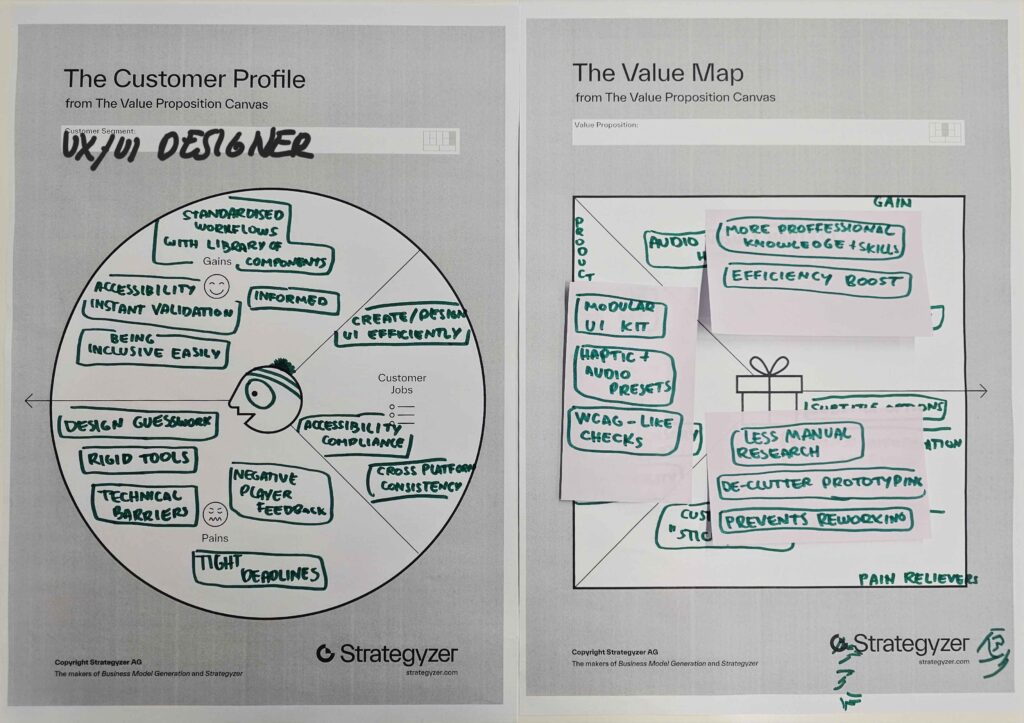

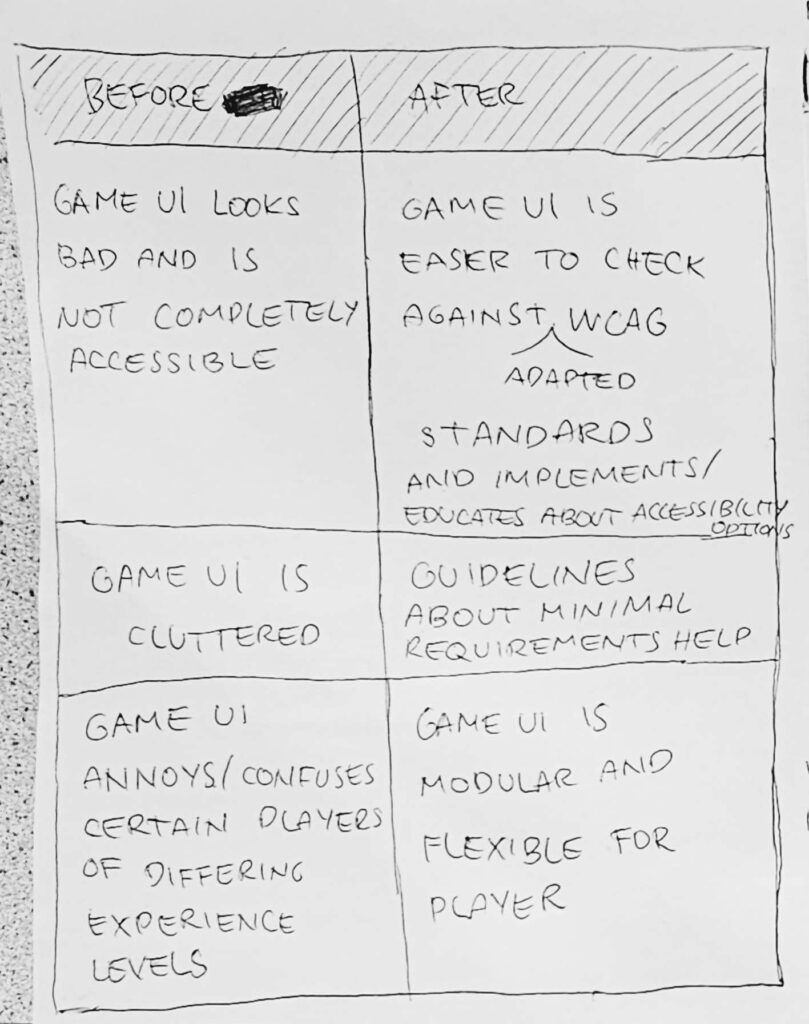

Most game interfaces are static and cluttered, creating massive legibility and navigation barriers for visually impaired players. Designers often lack the time or specialized tools to implement complex WCAG-like standards and multi-sensory feedback.

Why should we care about it?

Accessibility isn’t just a “feature”; it is a fundamental right to play. When games like Black Myth: Wukong launch with unreadable text, they exclude millions of players and damage their own reputation and reach.

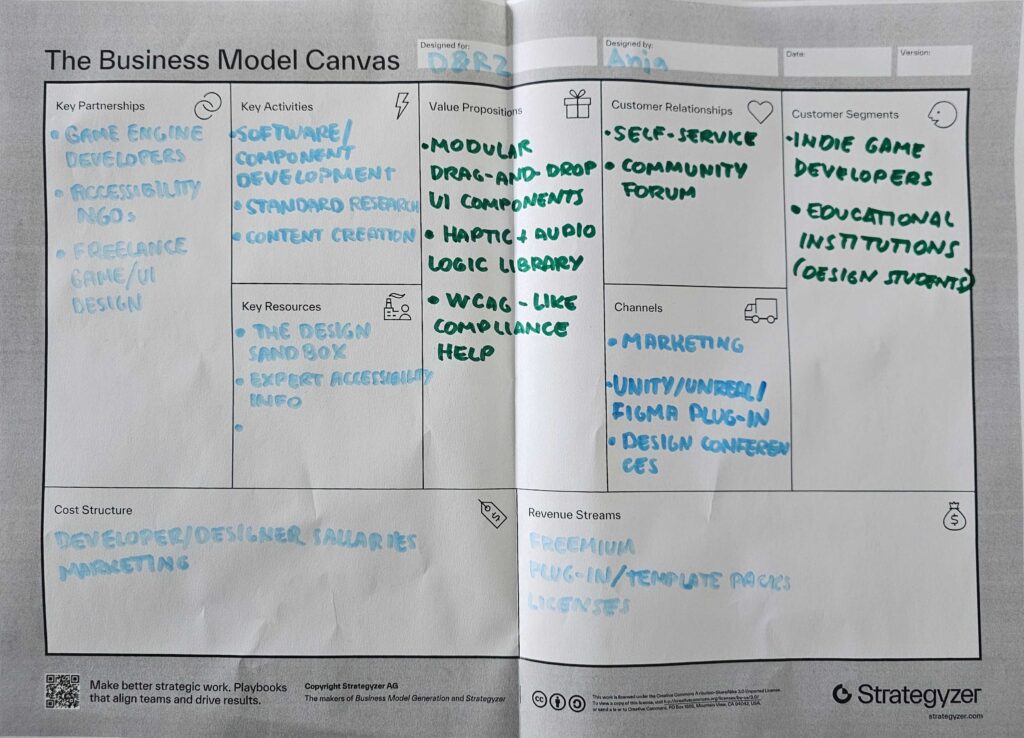

What is the solution? How does it work?

An interactive design engine that acts as a “Canva for Game UI.” It allows designers to drag-and-drop modular HUD elements and automatically checks them against accessibility rules while providing a library of haptic and audio logic.
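As a rough illustration of what one automatic accessibility check could look like, the sketch below tests a HUD element's text contrast. It assumes the engine would adopt the WCAG 2.x contrast-ratio formula and its AA thresholds (4.5:1 for normal text, 3:1 for large text). The `HudElement` type and `check_contrast` function are hypothetical names invented for this example, not part of any existing tool.

```python
from dataclasses import dataclass

RGB = tuple[int, int, int]  # 8-bit sRGB channels, 0-255


def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(color: RGB) -> float:
    """Relative luminance as defined by WCAG 2.x."""
    r, g, b = (_linearize(v) for v in color)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: RGB, background: RGB) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


@dataclass
class HudElement:
    """Hypothetical HUD element; a real engine would track far more properties."""
    name: str
    text_color: RGB
    background_color: RGB
    large_text: bool = False


def check_contrast(element: HudElement) -> bool:
    """Return True if the element meets the assumed WCAG AA contrast threshold."""
    required = 3.0 if element.large_text else 4.5
    ratio = contrast_ratio(element.text_color, element.background_color)
    return ratio >= required


if __name__ == "__main__":
    ammo = HudElement("ammo_counter", (119, 119, 119), (255, 255, 255))
    ratio = contrast_ratio(ammo.text_color, ammo.background_color)
    print(f"{ammo.name}: ratio {ratio:.2f}, passes AA: {check_contrast(ammo)}")
```

A check like this could run each time a designer drops or recolors an element, flagging failures in the editor instead of after launch. In a real game the background behind HUD text changes constantly, so a production check would likely need to sample many frames; this sketch covers only the fixed-color case.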

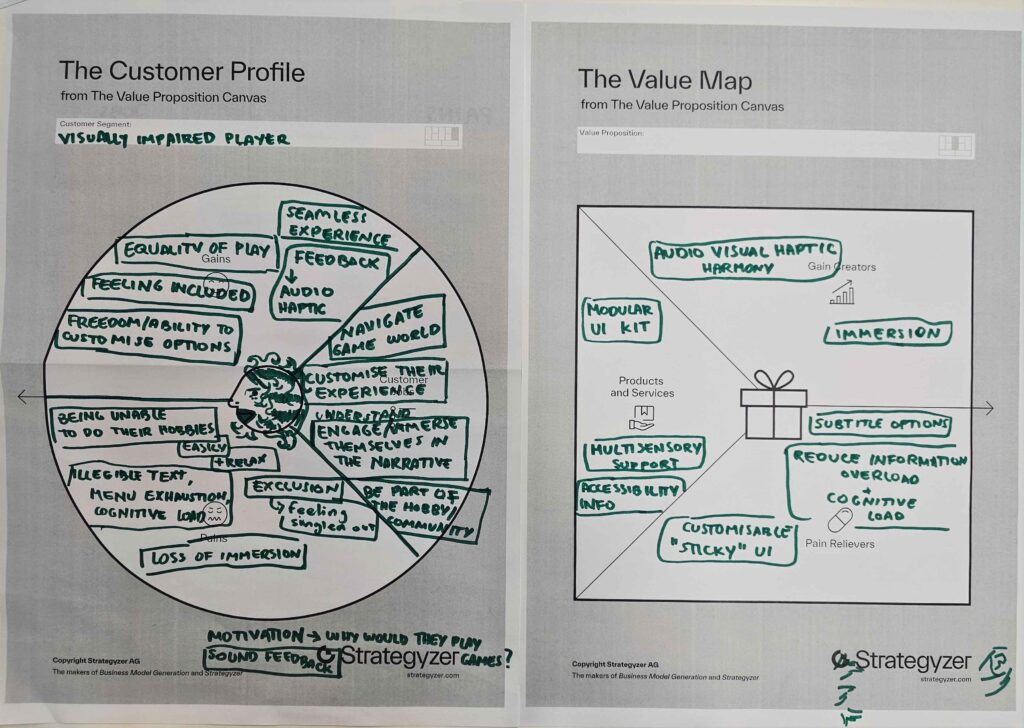

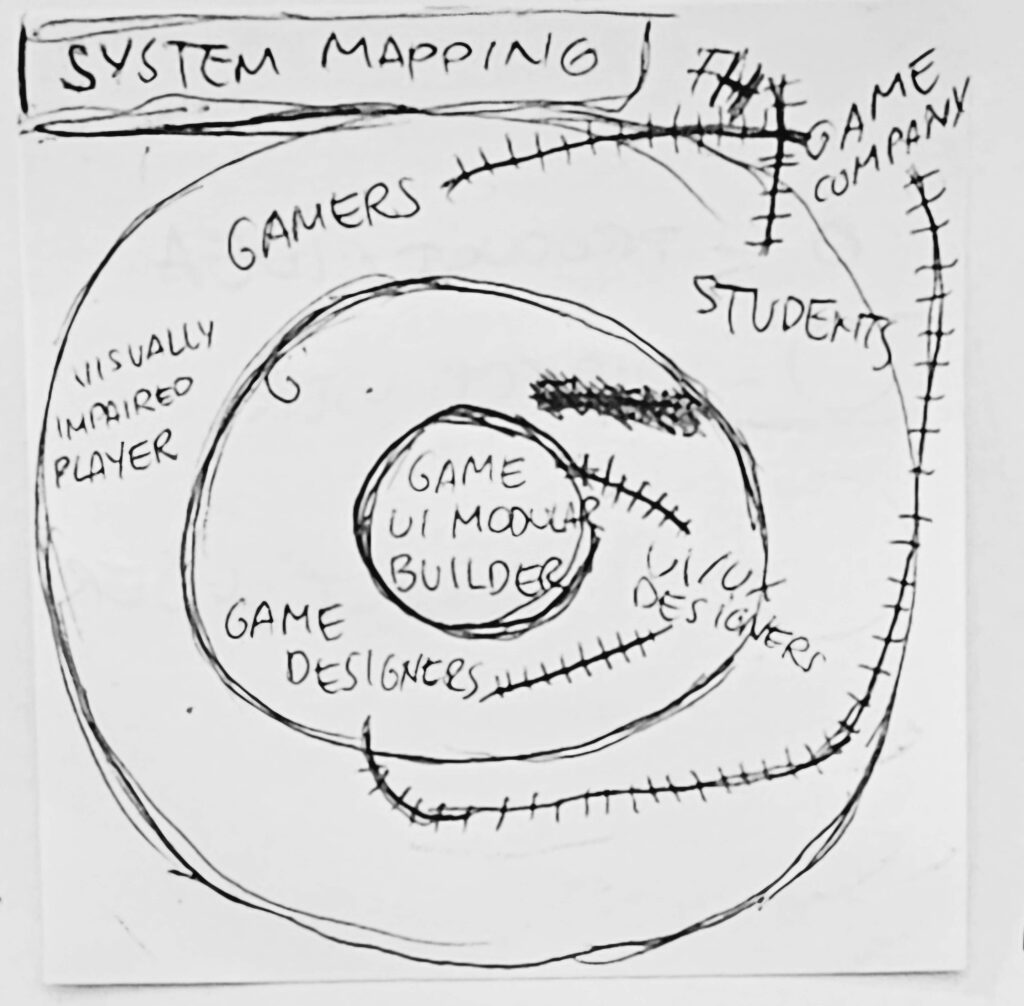

Who is the target audience/customer?

The target audience is the visually impaired gaming community, which needs better tools to play. The paying customers are UX/UI designers and game studios looking to streamline their workflow and meet professional standards.

What is going to happen? (Change & Impact)

We will move from a “one-size-fits-all” UI to a Modular Era where games are playable by everyone from day one. This shift reduces “design guesswork” for studios and replaces frustration with independence and mastery for players.

Bonus: How can this make money?

The platform will operate on a freemium SaaS model: a free basic toolkit for students and indie developers, and paid enterprise subscriptions for large studios that need advanced simulation tools and custom haptic libraries.