Design & Research | Master Thesis Log 06

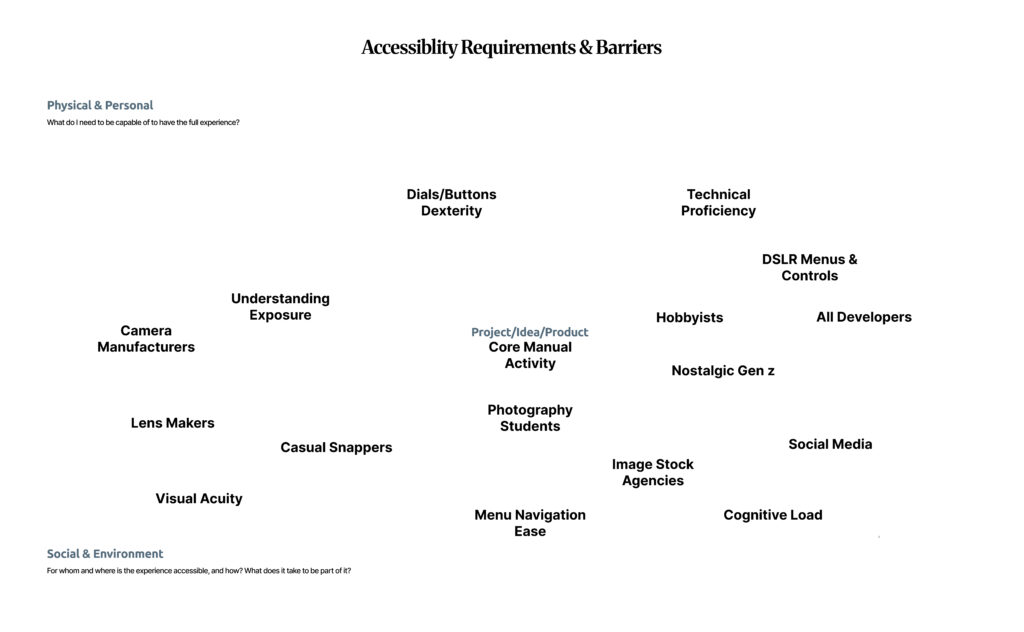

For the last few weeks, I have analyzed photography through the lens of philosophy. But as an Interaction Designer, I need to understand the user.

This week, I interviewed two distinct photographers. My goal was to investigate a core design problem: When the machine (AI) takes control of the image, do we lose the art?

The data I collected was surprising. One photographer sees a new evolution of tools, while the other sees a moral battle for truth.

Perspective 1: The Modern Realist

The first subject is a working digital photographer who uses modern tools. In our discussion, we talked about features like Generative Expand—where AI creates the background for you.

For him, this isn’t about “faking” reality; it’s about utility. He explained that sometimes you don’t have the budget for a studio or the right location, so the AI helps you “fix” the background. He is willing to give up control of those pixels to solve a problem.

Insight 1: Defining Control through “Manual Mode”

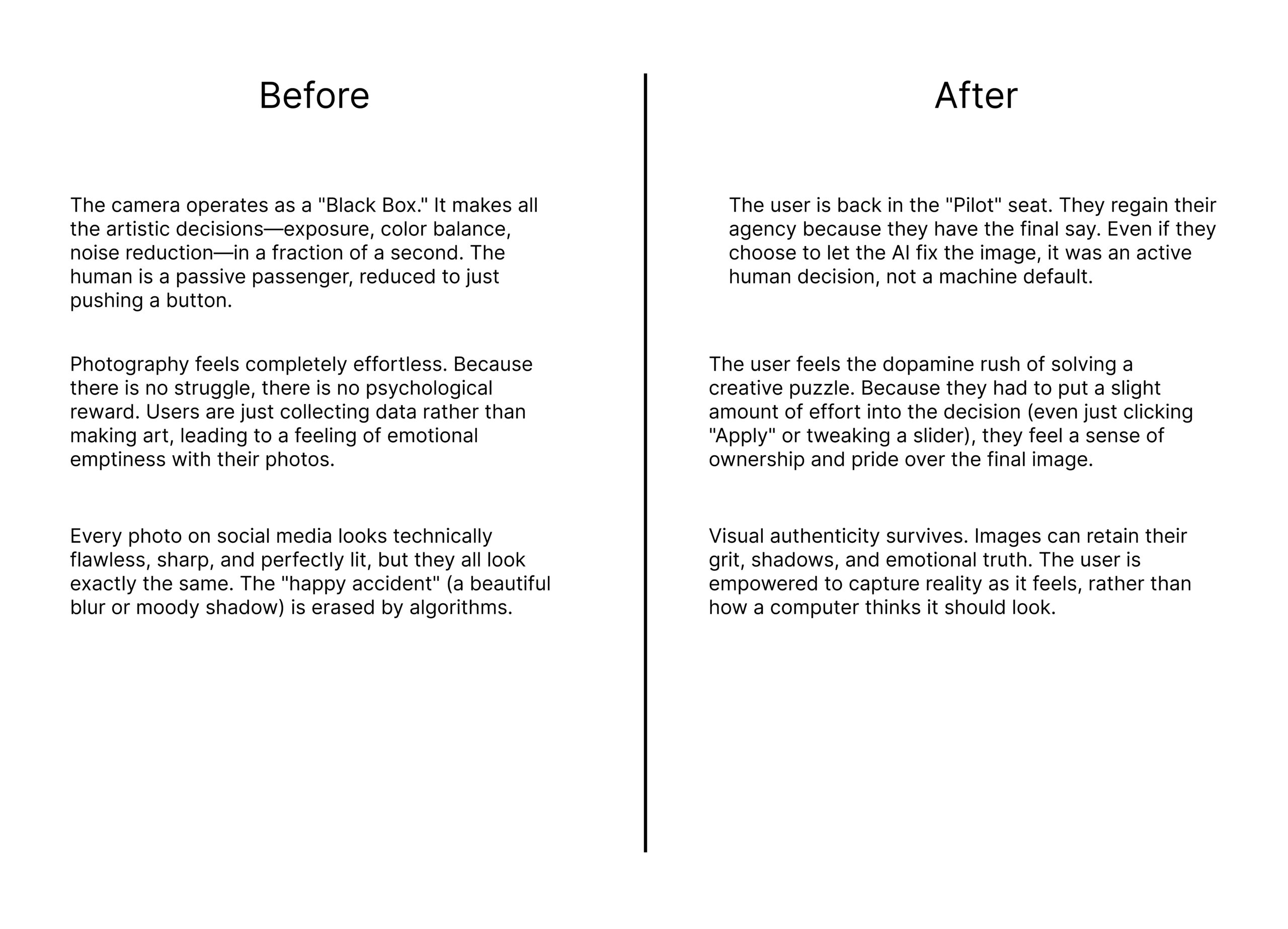

I pushed him on the question of Agency: If the camera is digital, is the computer doing the work?

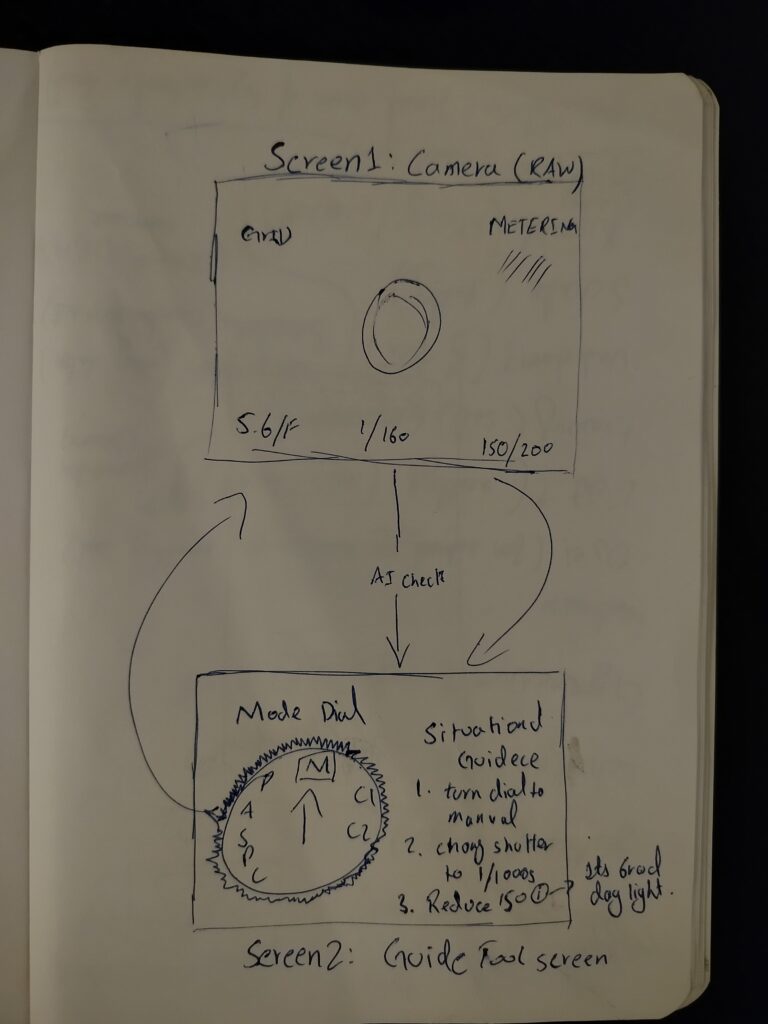

He clarified a crucial distinction across several of his answers. For him, the human is absolutely still in control, provided one condition is met: Manual Settings.

He emphasized that as long as the photographer is manually managing the technical variables—White Balance, Shutter Speed, Aperture, ISO—the human is the “Pilot.” Even if the image is digital, the decisions are human.

This is a vital finding for my thesis. It suggests that for digital natives, “Agency” is located in the Settings Menu, not in the film roll.

Insight 2: The Uncertainty of Future Control

However, he admitted that as AI improves, this balance might shift. He expressed a real uncertainty about where the line will be drawn in the future:

“I don’t think it will ever die honestly it’s a form of art that’s been around forever I think it may change in ways I hope it doesn’t get so reliant on AI but who truly knows.”

Insight 3: The “Digital is the New Film” Theory

Then, he offered a profound prediction. He believes that the definition of “Authenticity” is about to shift. Just as Film became the “vintage” alternative to Digital, he believes standard Digital cameras will become the “Authentic” alternative to AI:

“I think you will always have it around even if one day ai takes over you will have those who will still shoot film and those who will use digital as the new form of film vs AI which scary to think about but true”

The Analysis:

This suggests that “Agency” is relative. In 2030, holding a digital camera and manually setting the White Balance might be seen as the ultimate act of human control, because it proves a human was there.

Perspective 2: The Purist (Total Agency)

On the other side, I interviewed a legend in the New York film photography scene. He is known for capturing the “Madness” of NYC—raw, unedited, and chaotic.

I asked him if the perfection of AI images offends him. His answer ignored the utility argument entirely. He focused strictly on Value.

“I ignore it. Work done by a human will always be worth more”

He believes that the “Apparatus” (the machine) cannot create value. Only human labor creates value. When I asked if the public will eventually be fooled by the shiny look of AI, he gave a final verdict:

“The truth always prevails”

The Analysis:

For the Purist, “Control” is binary. You either have it, or you don’t. He refuses to let the AI fix his backgrounds or clean up his noise, because those imperfections are where the “Truth” lives.

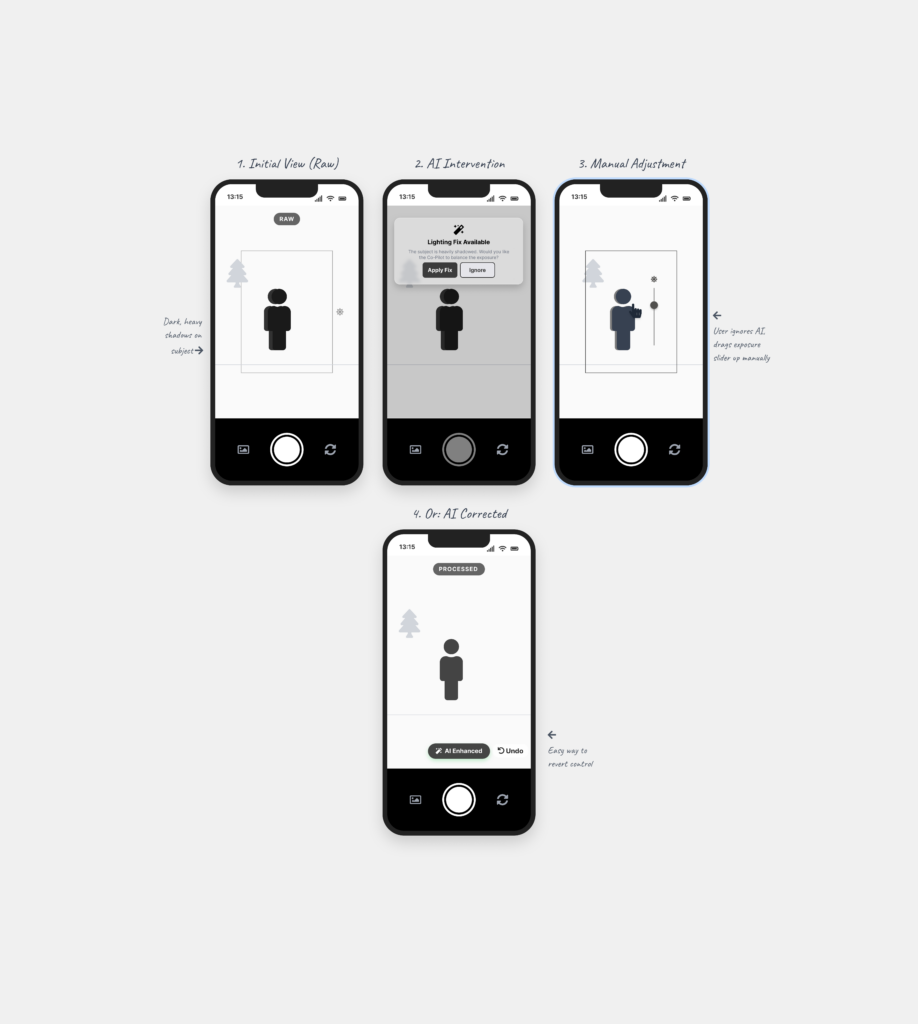

Refining the Design Problem:

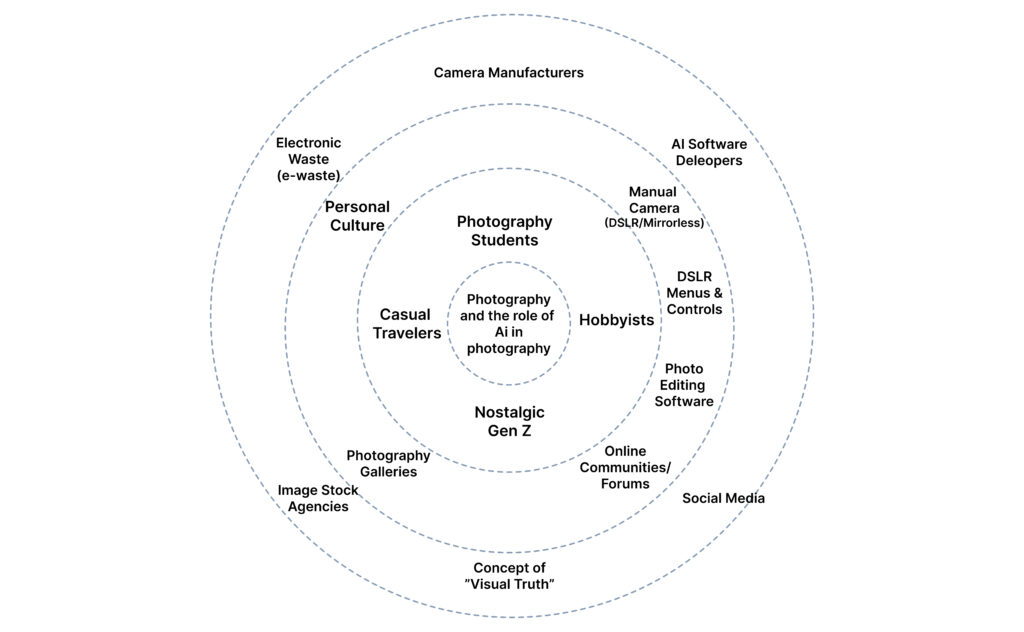

This field research has clarified the conflict I am studying. We have two user groups with opposing definitions of “Control”:

- Group A (Evolution): Believes in Selective Control. As long as they control the technical settings (Manual Mode), they feel like the artist—even if AI helps generate the background.

- Group B (Resistance): Believes in Total Control. They reject machine intervention entirely because they believe value comes from physical truth and human labor.

Refining the Question:

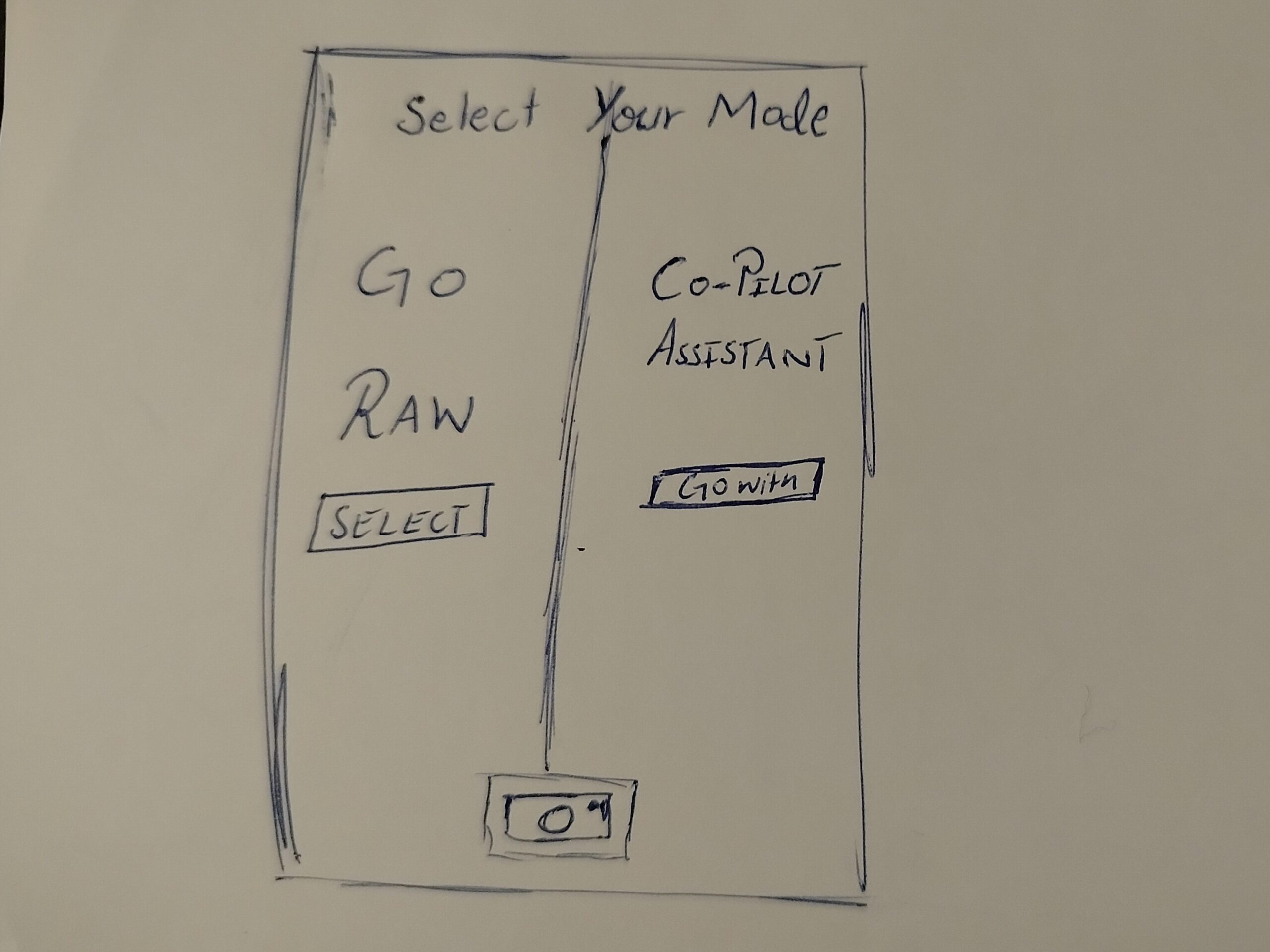

If we allow AI to take over parts of the process (as Group A accepts), do we eventually destroy the “Value” that Group B cherishes? Or is controlling the “Settings” enough to keep the human soul alive?

Next Steps

To answer this, I need to find the middle ground. Next week, I am interviewing “Hybrid” creators, people who use both manual film cameras and high-tech cameras, to see how they navigate the balance between Control and Automation.