Physically Based Rendering (PBR) has become the standard for modern 3D graphics because it produces materials that look believable under a wide range of lighting conditions. Unlike older empirical models like Phong or Lambert, PBR follows real-world physical principles, so metal reflects like metal and plastic looks like plastic regardless of the environment. In web-based 3D engines like Three.js and Babylon.js, PBR is implemented as a complete pipeline, from texture authoring to shader evaluation.

Textures

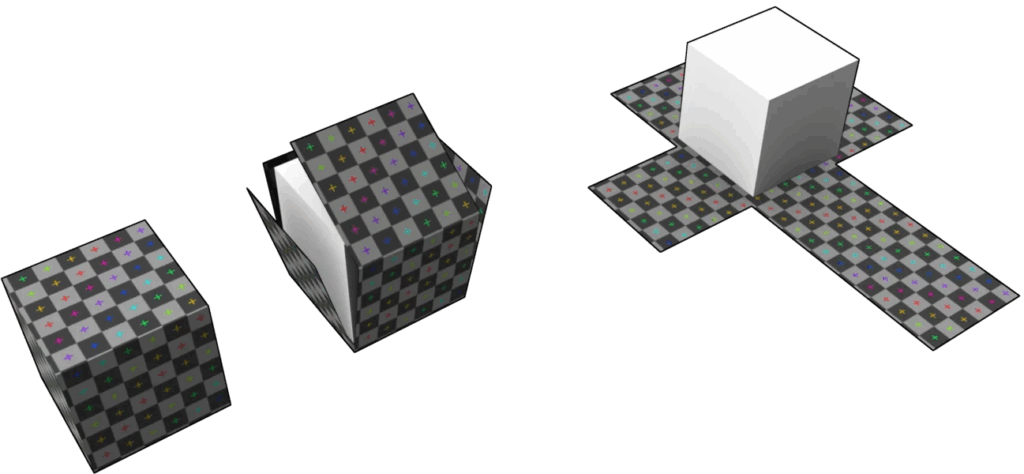

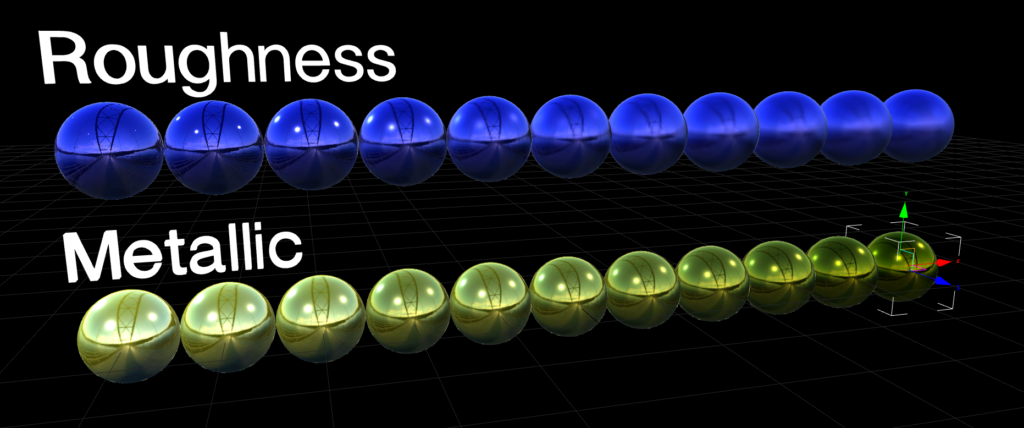

At the heart of PBR is a set of core input textures that describe material properties: base color (also known as albedo), metallic, roughness, normal, ambient occlusion (AO) and emission. The base color map provides the surface color without any lighting or shadow baked in. The metallic map determines whether a surface behaves like a metal, using a value from 0 to 1. The roughness map controls how smooth the surface is at a microscopic scale: a low value gives the sharp reflections of a shiny pool ball, while a high value gives a very dusty rock that scatters light evenly with no visible reflections. Normal maps add fine surface detail that doesn’t need to be modeled, such as tiny cracks or small bolts. Ambient occlusion darkens crevices to approximate the shadowing of indirect light. Finally, emissive maps make parts of the surface glow independently of the lights in the scene.

These input textures are fed into a Bidirectional Reflectance Distribution Function (BRDF), most commonly a microfacet model. A microfacet model assumes the surface is made of tiny mirror-like facets whose orientations follow a normal distribution function (NDF), typically GGX. The specular part of the BRDF combines three terms: the Fresnel term (view-dependent reflectivity), the geometry term (microfacet shadowing and masking) and the NDF (facet distribution based on roughness).
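
As a rough illustration of how the three terms fit together, the sketch below evaluates a Cook-Torrance style specular term for scalar inputs. It assumes the widely used GGX distribution, Schlick's Fresnel approximation and the Smith geometry term in its Schlick-GGX form with the direct-lighting remapping `k = (roughness + 1)^2 / 8`. The function names are illustrative only, and individual engines may use different variants of each term.

```typescript
// D term: GGX (Trowbridge-Reitz) normal distribution function.
// Assumes the common remapping alpha = roughness^2.
function distributionGGX(nDotH: number, roughness: number): number {
  const alpha = roughness * roughness;
  const alpha2 = alpha * alpha;
  const denom = nDotH * nDotH * (alpha2 - 1) + 1;
  return alpha2 / (Math.PI * denom * denom);
}

// F term: Schlick's approximation of Fresnel reflectance.
// f0 is the reflectance at normal incidence.
function fresnelSchlick(vDotH: number, f0: number): number {
  return f0 + (1 - f0) * Math.pow(1 - vDotH, 5);
}

// G term helper: Schlick-GGX masking for a single direction.
function geometrySchlickGGX(nDotX: number, k: number): number {
  return nDotX / (nDotX * (1 - k) + k);
}

// G term: Smith's method combines masking (view) and shadowing (light).
// The k remapping below is the one often used for direct lighting.
function geometrySmith(nDotV: number, nDotL: number, roughness: number): number {
  const r = roughness + 1;
  const k = (r * r) / 8;
  return geometrySchlickGGX(nDotV, k) * geometrySchlickGGX(nDotL, k);
}

// Specular BRDF: D * F * G / (4 * (n.l) * (n.v)).
// The denominator is clamped to avoid division by zero at grazing angles.
function specularBRDF(
  nDotL: number,
  nDotV: number,
  nDotH: number,
  vDotH: number,
  roughness: number,
  f0: number
): number {
  const d = distributionGGX(nDotH, roughness);
  const f = fresnelSchlick(vDotH, f0);
  const g = geometrySmith(nDotV, nDotL, roughness);
  return (d * f * g) / Math.max(4 * nDotL * nDotV, 1e-4);
}
```

All dot products are expected to be clamped to the range 0 to 1 before being passed in. A real shader would evaluate the Fresnel term per color channel instead of on a single scalar.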

For metals, the diffuse component is effectively zero and the specular reflection is tinted by the base color, which gives each metal its characteristic hue. For non-metals, the specular reflection is achromatic (uncolored) and fairly weak, typically around 4% of the incoming light at normal incidence, and the diffuse component uses the base color. Because the model is energy-conserving, a surface never reflects more light than it receives.
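
The split between metals and non-metals can be sketched as a small function that derives the diffuse color and the specular reflectance at normal incidence (often written F0) from the base color and the metallic value. The constant 0.04 for non-metals is a common convention and not a universal rule, and the names `Rgb`, `lerp` and `metallicWorkflow` are made up for this example.

```typescript
// Linear RGB color with components in the range 0..1.
type Rgb = [number, number, number];

// Linear interpolation between a and b.
function lerp(a: number, b: number, t: number): number {
  return a + (b - a) * t;
}

// Derives the diffuse color and specular F0 for the metallic workflow.
// Assumption: non-metals use a fixed, uncolored F0 of 0.04.
function metallicWorkflow(baseColor: Rgb, metallic: number): { diffuse: Rgb; f0: Rgb } {
  const dielectricF0 = 0.04;
  const keep = 1 - metallic; // share of base color that stays diffuse
  const diffuse: Rgb = [baseColor[0] * keep, baseColor[1] * keep, baseColor[2] * keep];
  const f0: Rgb = [
    lerp(dielectricF0, baseColor[0], metallic),
    lerp(dielectricF0, baseColor[1], metallic),
    lerp(dielectricF0, baseColor[2], metallic),
  ];
  return { diffuse, f0 };
}
```

With `metallic` set to 1 the diffuse color becomes black and F0 equals the base color, which is what makes a metal's reflections tinted. With `metallic` set to 0 the base color goes entirely to the diffuse term and F0 stays at the uncolored default.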

IBL

To get convincing reflections and indirect light, PBR usually relies on Image-Based Lighting (IBL). IBL uses high dynamic range images (HDRIs) of an environment to represent light arriving from all directions around the object. These environment maps are prefiltered into progressively blurrier levels so that rougher materials sample a more blurred version of the environment. Combined with the BRDF, this allows metals and glossy plastics to respond realistically to their surroundings without the need for many real-time lights in the scene.
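
One simple way to pick the blur level is to store the prefiltered versions as mip levels of the environment map and select a level from the roughness value. The sketch below assumes a plain linear mapping from roughness to mip level. That mapping is an illustrative assumption, since engines may use a nonlinear one, and `roughnessToMipLevel` is a hypothetical helper and not part of any engine API.

```typescript
// Maps a roughness value to a (fractional) mip level of a prefiltered
// environment map. Level 0 is the sharpest, the last level the blurriest.
// Assumption: roughness maps linearly onto the available levels.
function roughnessToMipLevel(roughness: number, mipCount: number): number {
  const clamped = Math.min(Math.max(roughness, 0), 1);
  return clamped * (mipCount - 1);
}
```

A fractional result such as 2.5 means the sample would be blended between levels 2 and 3, which GPUs can do with trilinear filtering.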

Authoring

Authoring PBR textures for the web demands discipline to avoid common pitfalls. Tools like Substance Painter can generate these maps, but exporting them with the wrong settings can ruin the look. The base color must be in the sRGB color space, while the metallic, roughness, normal and AO maps must be treated as linear, non-color data. Roughness should range from around 0.05 for a mirror-like surface to around 0.9 for matte materials, avoiding the extremes, which can look jarring. Metallic is usually binary, either 0 or 1 with nothing in between, although there are exceptions for materials like metallic paint. Normal maps have to match the tangent space convention expected by the engine. Overly bright HDRIs can wash out scenes, and mismatched roughness conventions between tools can make reflections look wrong.
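
The color space rule matters because sRGB values are stored with a nonlinear curve and have to be decoded before they are used in lighting math, whereas data maps must be read as they are. The sketch below uses the standard sRGB decoding formula. The `MapKind` type and the `decodeTexel` helper are hypothetical names for this example, and treating emissive maps as sRGB follows the glTF convention and is not something stated above.

```typescript
// Converts one sRGB-encoded channel value (0..1) to linear light.
function srgbToLinear(c: number): number {
  return c <= 0.04045 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Hypothetical labels for the texture types discussed in this article.
type MapKind = "baseColor" | "emissive" | "metallic" | "roughness" | "normal" | "ao";

// Decodes a stored channel value depending on what the map represents.
// Color maps are sRGB-encoded; data maps are already linear.
// Assumption: emissive is treated as a color map (glTF convention).
function decodeTexel(kind: MapKind, value: number): number {
  const isColor = kind === "baseColor" || kind === "emissive";
  return isColor ? srgbToLinear(value) : value;
}
```

A stored value of 0.5 illustrates the difference: as base color it decodes to roughly 0.214 in linear light, while as roughness it stays at 0.5. Tagging a roughness map as sRGB by mistake would therefore make the material noticeably shinier than intended.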

Performance Considerations

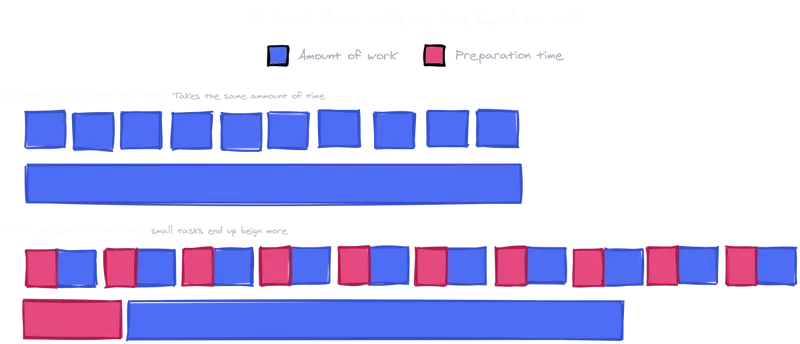

Performance is a key consideration because PBR shaders are more complex than a simple lighting model like Blinn-Phong. Each fragment requires multiple texture lookups, Fresnel calculations and environment sampling, which can become a bottleneck on low-end devices. Web engines approximate where possible. Three.js, for example, uses a simplified BRDF that approximates multiple scattering rather than simulating it in full, while Babylon.js’ PBRMaterial supports advanced features like clearcoat but allows reflections or IBL to be disabled for speed. Optimizations include reducing texture resolution, packing several maps into the channels of a single texture (such as AO, roughness and metallic), and shipping lower-resolution maps to mobile devices.
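
One common way to combine maps is channel packing, where several grayscale maps share the color channels of a single texture so the shader needs fewer texture lookups. The sketch below packs AO, roughness and metallic into the red, green and blue channels of one RGBA buffer, which is the layout used by glTF. The `packOrm` function is a hypothetical offline helper and not an engine API, and it assumes all three inputs are 8-bit grayscale images of the same size.

```typescript
// Packs three single-channel 8-bit maps into one RGBA buffer:
// R = ambient occlusion, G = roughness, B = metallic, A = 255 (unused).
function packOrm(ao: Uint8Array, roughness: Uint8Array, metallic: Uint8Array): Uint8Array {
  if (ao.length !== roughness.length || ao.length !== metallic.length) {
    throw new Error("All input maps must have the same number of pixels");
  }
  const packed = new Uint8Array(ao.length * 4);
  for (let i = 0; i < ao.length; i++) {
    packed[i * 4] = ao[i];
    packed[i * 4 + 1] = roughness[i];
    packed[i * 4 + 2] = metallic[i];
    packed[i * 4 + 3] = 255;
  }
  return packed;
}
```

The packed buffer replaces three separate textures with one, which reduces both the number of files to download and the number of samples taken per fragment.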

The strength of PBR on the web is portability: materials authored once look largely consistent across engines and devices. By mastering the input textures, the microfacet BRDF and the IBL pipeline, developers can create production-quality visuals without proprietary tools. The cost is higher shader complexity, but targeted optimizations keep it viable even on modest hardware.

SOURCES

1: https://learnopengl.com/PBR/Theory

2: https://www.cg.tuwien.ac.at/research/publications/2017/OPPITZ-2017-3DM/OPPITZ-2017-3DM-report.pdf

3: https://archdesignmart.in/the-ultimate-guide-to-pbr-materials-understanding-physically-based-rendering/

4: https://www.mathematik.uni-marburg.de/~thormae/lectures/graphics1/code/WebGLShaderMicrofacetBrdf/ShaderMicrofacetBrdf.html

5: https://sbcode.net/threejs/environment-maps/