One of the most eye-opening parts of my research so far has been experiencing live audiovisual events in person. Reading about VJing, visual music or animation can explain technical possibilities, but it cannot replicate the energy, unpredictability or emotional resonance of a crowd. Field trips have become essential not just for inspiration, but for understanding the audience as an active participant in the visual system.

Being physically present in these environments makes clear that audiovisual design does not exist in isolation. It is always embedded in space, shaped by sound pressure, lighting conditions, movement and social dynamics. The same visual can feel completely different depending on the crowd, the venue and the collective mood.

A particularly inspiring encounter was with Julian Horus, a VJ I discovered on Instagram whose work is very close to what I want to accomplish. We think alike in several ways: he performs live with a presence that mirrors a DJ’s, shares the stage instead of leaving sound in the spotlight and visuals in the back corner of the room, and approaches his work playfully and experimentally. For example, he rigged a video game controller to TouchDesigner so he can join the crowd, feel the energy and “play” the visuals from within. Seeing his approach confirmed for me that it is possible to design visuals that are both interactive and part of the performance, not just accompaniment.

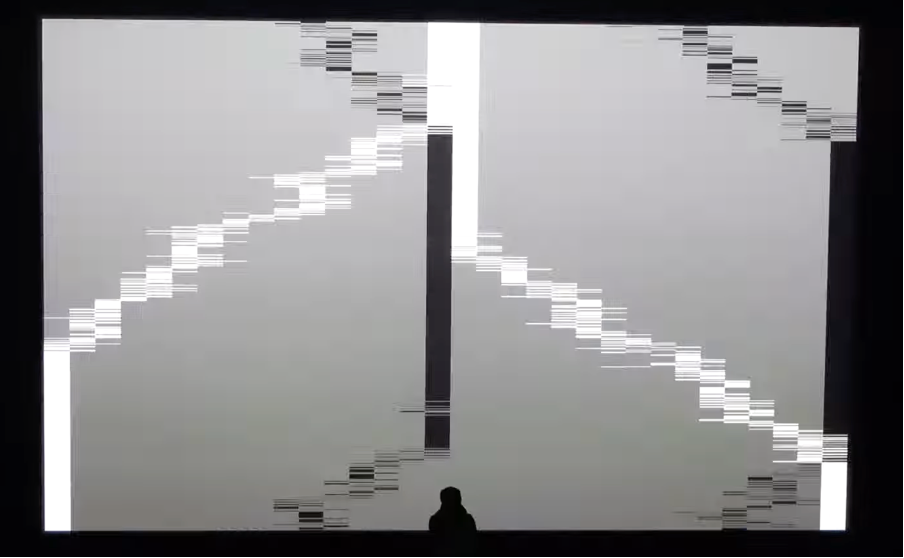

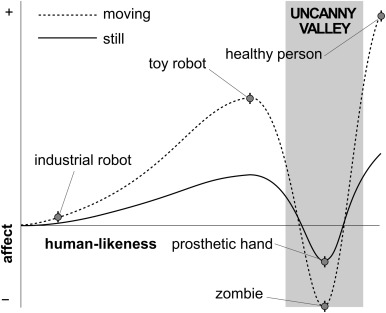

I then had the chance to meet him in person at an event at the Sub event space in Graz. I went there to dance and to experience the attempted symbiosis between sound and visuals firsthand. It was inspiring to see how the crowd responded to visuals that were at times influenced by their own movements, captured by a camera connected to his TouchDesigner composition. Experiencing this feedback loop made clear how the audience itself becomes a data source within the system. Movement, density and collective rhythm feed back into the visuals, which in turn influence how people move and interact. This circular relationship reinforces the idea that audiovisual performance is a shared process rather than a one-directional presentation.
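I do not know how Julian's TouchDesigner composition actually processes the camera image, so the following is only my own minimal sketch of the idea, not his method. It assumes grayscale frames stored as nested lists of values from 0 to 255 and uses simple frame differencing: the more pixels change between two frames, the more "movement" the crowd contributes. The names `motion_energy` and `smooth` are hypothetical and chosen just for illustration.

```python
from typing import List

# Hypothetical frame format: rows of grayscale pixel values (0-255).
Frame = List[List[int]]


def motion_energy(prev: Frame, curr: Frame) -> float:
    """Mean absolute pixel difference between two frames, scaled to 0..1."""
    total = 0
    count = 0
    for row_prev, row_curr in zip(prev, curr):
        for a, b in zip(row_prev, row_curr):
            total += abs(a - b)
            count += 1
    return total / (255 * count) if count else 0.0


def smooth(previous: float, target: float, amount: float = 0.8) -> float:
    """Exponential smoothing so the visual parameter does not jump every frame."""
    return amount * previous + (1.0 - amount) * target


if __name__ == "__main__":
    still = [[10, 10], [10, 10]]
    moving = [[10, 200], [90, 10]]
    level = 0.0
    for prev, curr in [(still, still), (still, moving), (moving, still)]:
        level = smooth(level, motion_energy(prev, curr))
        print(round(level, 3))
```

The smoothed value could then drive any visual parameter, such as brightness or distortion. The smoothing amount is a design choice: higher values make the visuals react more slowly but feel calmer.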

Alongside this, I started testing VJ software myself, namely Resolume, and experimenting with first visualizations for club spaces. These exercises are exploratory rather than outcome-driven. There are no finished products yet, but they allow me to test how my visual language behaves in environments closer to live performance.

Another important reference in my practical exploration has been Arkestra, a visual performance tool. In contrast to large, industry-standard software environments such as Resolume, Arkestra appears to be developed on a much smaller scale and is likely created and maintained by a single individual or a very small team. This is reflected in its pricing and overall design, which feel approachable for beginners and independent designers.

What makes Arkestra particularly interesting to me is its focus on immediacy and experimentation rather than technical complexity. The software allows for quick visual results without requiring deep programming knowledge, lowering the threshold for entry into audiovisual performance. This makes it suitable not only for live experimentation but also for learning how visuals react to sound through hands-on play.

I have been actively testing Arkestra by using material from my FH projects as source content. Existing animations and visual studies are re-contextualized within Arkestra’s effects and reactive systems, allowing me to generate new visual outcomes from already familiar material. This process has been valuable in understanding how different tools reinterpret the same visual language and how sound-driven manipulation can transform meaning and atmosphere.

Working with Arkestra has also highlighted the importance of tool choice in shaping creative decisions. Its limitations are not obstacles but productive constraints, encouraging intuitive exploration rather than polished perfection. Through this process, I am learning how beginner-friendly tools can still support meaningful experimentation and contribute to the development of a personal audiovisual practice.

Another source of insight came from conversations with my brother, co-owner of the CxD label. I shared my idea of rethinking where VJs are placed in a party environment, creating a new narrative for sound and visuals. I also shared my vision for a Face2Face event, where the VJ and DJ face each other and actively “dance” together, each pressing buttons, one shaping the music and the other the visuals.

Depending on the story intended for the event, this could manifest as a playful one-on-one or a synchronized performance. We agreed this is pioneering work, which increased my excitement for this journey.

Hands-on experimentation has also been crucial. In first-semester projects such as the “Big Mouth Project”, I explored reactive visuals by animating a cube that responds to music through expressions. I combined rhythm, chance and intentionally placed keyframes to highlight moments of calm or intensity in a track. This manual approach revealed how subtle timing decisions can significantly alter emotional perception. Even small delays, accelerations or pauses change how visuals feel in relation to sound, reinforcing the importance of sensitivity and intuition in audiovisual design. Over time, these experiments could provide material to observe audience reactions, closing the feedback loop and generating new insights for design.
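To make the point about timing more concrete, here is a small sketch of the kind of mapping I mean. It is not the actual expression from the project, just an illustration under my own assumptions: the music is reduced to a list of loudness values between 0 and 1 (one per frame), and the cube's scale is read from that list with an optional delay. The function names and the scale range of 100 to 180 percent are hypothetical.

```python
from typing import Sequence


def remap(value: float, in_min: float, in_max: float,
          out_min: float, out_max: float) -> float:
    """Linearly map value from the input range to the output range, clamped."""
    if in_max == in_min:
        return out_min
    t = (value - in_min) / (in_max - in_min)
    t = max(0.0, min(1.0, t))
    return out_min + t * (out_max - out_min)


def cube_scale(amplitudes: Sequence[float], frame: int, delay_frames: int = 0,
               calm: float = 100.0, intense: float = 180.0) -> float:
    """Cube scale in percent at a frame, reading the loudness delay_frames earlier."""
    if not amplitudes:
        return calm
    # Clamp the index so early frames and late frames stay inside the list.
    index = max(0, min(len(amplitudes) - 1, frame - delay_frames))
    return remap(amplitudes[index], 0.0, 1.0, calm, intense)


if __name__ == "__main__":
    loudness = [0.0, 0.5, 1.0, 0.25]  # assumed per-frame loudness values
    for frame in range(len(loudness)):
        on_beat = cube_scale(loudness, frame)
        late = cube_scale(loudness, frame, delay_frames=1)
        print(frame, on_beat, late)
```

Comparing the two printed columns shows the same movement shifted by one frame. In practice, even such a small offset changes whether the cube seems to hit the beat or to answer it.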

Seeing my heroes in action, both in person and through their work online, has been highly motivational. Teachers such as Markus Zimmermann reminded me of the value of seeking out people whose practice aligns with my own aspirations. Witnessing their mindset and creative process makes my goals feel tangible and achievable.