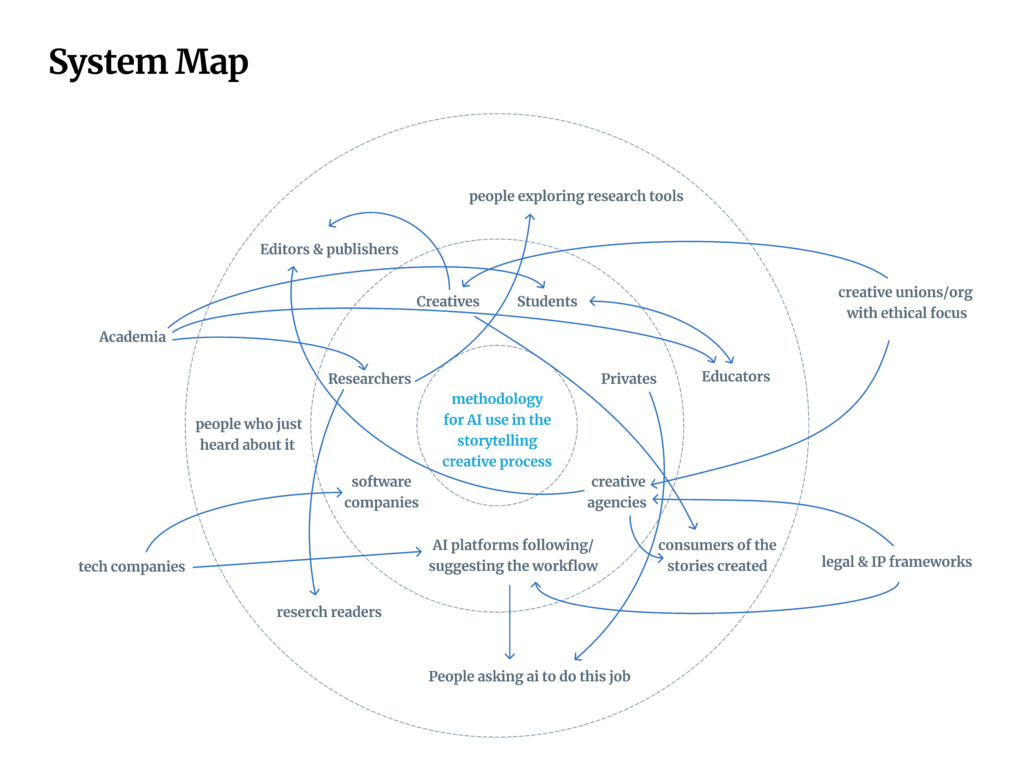

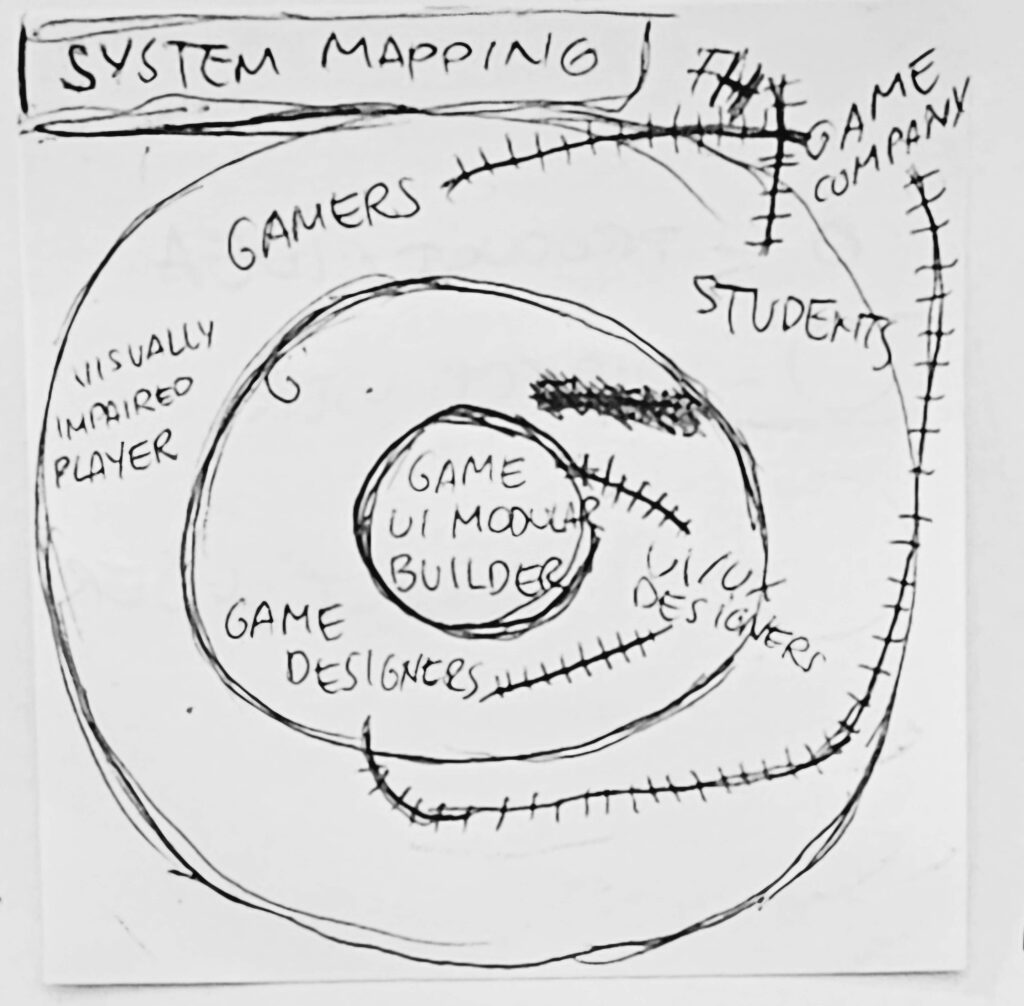

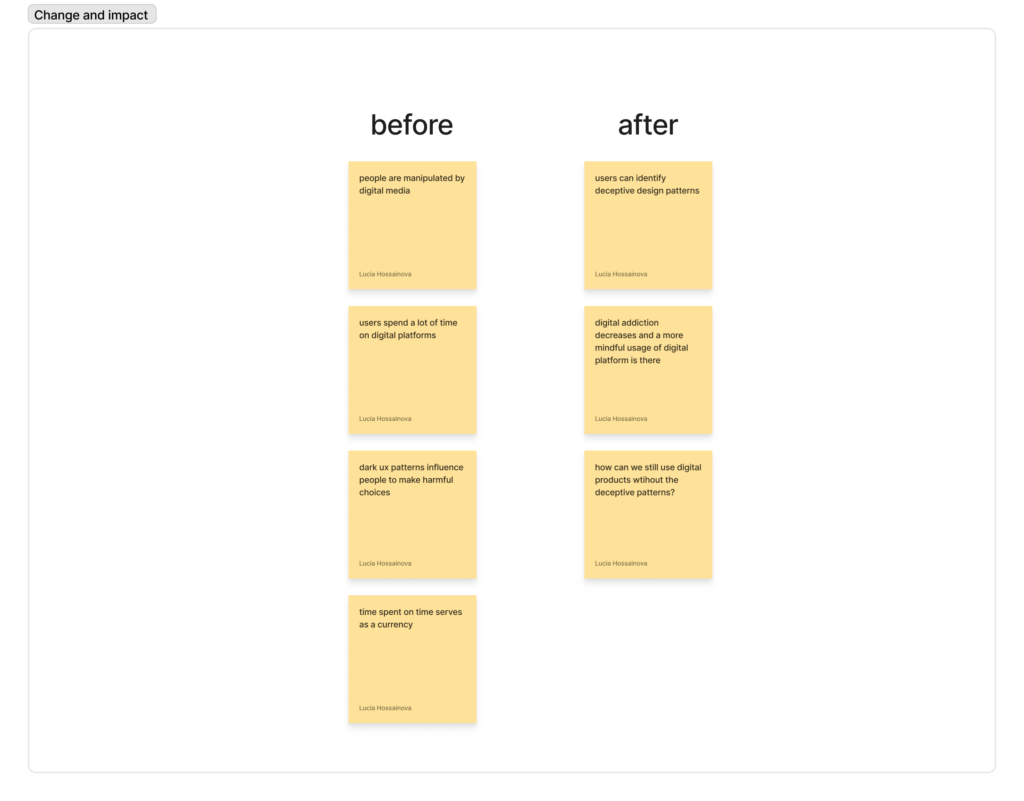

After outlining the actors, affected people, and sectors involved in a possible new direction for the creative process, let’s try to visualize what the current state of things looks like and what it could become after the introduction of a new methodology.

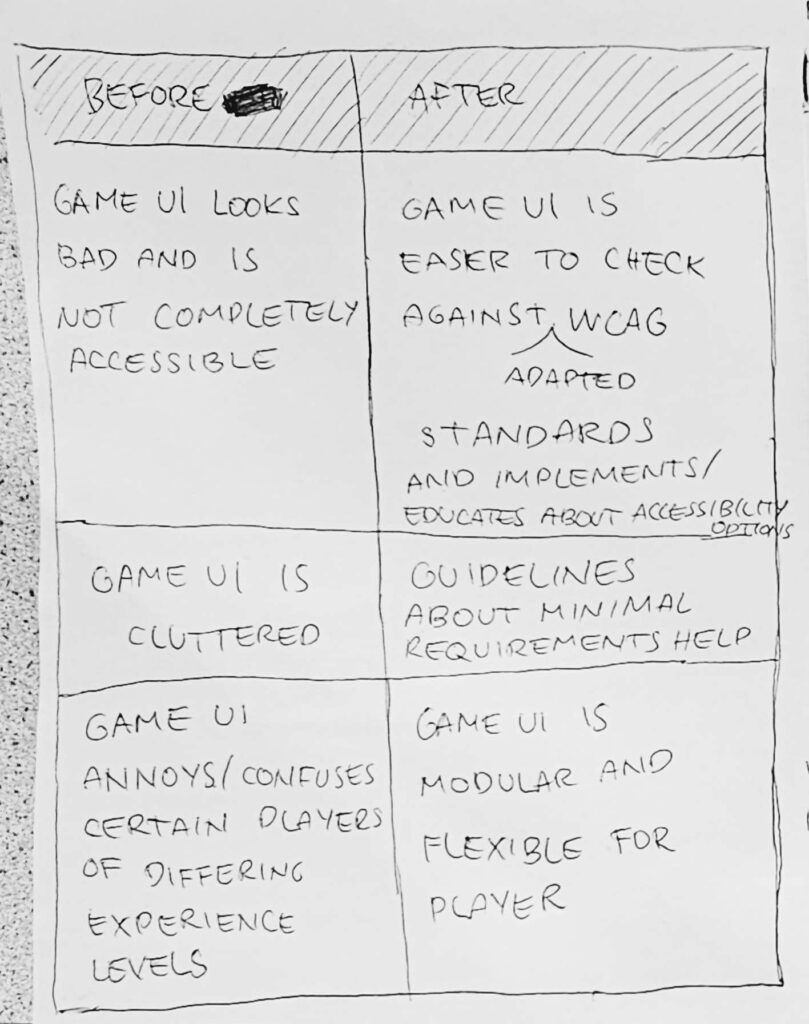

Before

- Involvement of AI in all stages of storytelling: generation, ideation, and creation

- No precise, documented knowledge of how to do this in this specific field

- Prompts to AI tools are poorly written and lack a clear purpose

- Too many requests, leading to wasted resources and environmental impact

- Unethical use of AI

After

- Easy-to-use, step-by-step methodology for understanding and applying a correct workflow in the creation process

- Aware and ethical use of AI tools

- Fewer prompts/requests sent, with more efficient answers

- Open a new dialogue

- Set a standard or starting point for the field

Right now, most creatives are figuring out these new directions alone. A structured methodology could change that and optimize our workflow without making us feel left out of the process. It’s not just about better prompts; it’s about reflecting on how an entire field relates to a tool that is now part of our everyday life.